Kernel Planet

April 06, 2026

As most of you are painfully aware, Linux Plumbers Conference registrations can run out very fast (yes, we got lots of complaints last year). This year, we’re taking a couple of steps to alleviate the issue. Firstly, we’re expanding the venue size in Prague to match the number of attendees we got in Vienna (800), which will hopefully mean we have more than enough places to keep registration open all the way up to the beginning of the conference. Secondly, we’re going to have a pre-registration period starting two weeks before general registration opens for anyone who submits content. The way this will work is that if you submit anything via indico before general registration opens, you’ll receive a voucher and instructions to participate (this applies to every track and MC submission regardless of the accept/reject or pending state). The cost will be the same as general registration (US$600) but you’ll be under no obligation to take up the voucher, which will expire when general registration opens. We’re aligning the acceptance/rejection notices of the tracks we directly control (Refereed and Kernel Summit) to be complete around the time we open pre-registration. However, for other tracks and MC submissions that aren’t aligned, if you take up an early registration voucher but are subsequently offered a free pass, we’ll refund it (although if your company pays, we’d appreciate not having to since cvent charges us).

As a reminder of free pass distribution: every accepted Track Speaker (Refereed, Kernel Summit, Net, BPF and Toolchain) gets a free pass. However, Microconferences operate differently and accepted Microconference discussion leads may not receive a free pass (Microconferences have two free passes each and can distribute them arbitrarily to encourage key attendees).

The anticipated date for the opening of early registration is Friday 10 July 2026, but remember this may change due to logistical problems with the cvent website (which we don’t control).

April 06, 2026 04:13 PM

April 01, 2026

I'm doing a podcast recording this week, so I wanted to run some numbers so I could have some facts rather than feels. It turns out my feels were off by a factor of 3 or so.

If asked, I've always said the drm subsystem probably has 100 or so contributors per release cycle.

Did the simplest:

git log --format='%aN' v6.14..v6.15 drivers/gpu/drm/ include/uapi/drm/ include/drm/ | sort -u | wc -l

Iterated over a few kernel releases

v6.15 326

v6.16 322

v6.17 300

v6.18 334

v6.19 332

v7.0-rc6 346

The number for the complete kernel in those scenarios is usually ~2000, which means the drm subsystem has around 15-16% of the kernel contributors.
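The per-release numbers above can be reproduced with a small loop. This is only a minimal sketch, assuming it is run inside a kernel checkout that has the release tags; the function names `count_authors`, `share_percent` and `drm_report` are made up for illustration, and the path list is the one from the command above.

```shell
# Count unique author names in a revision range, optionally limited to paths.
# usage: count_authors <range> [path...]
count_authors() {
    range=$1
    shift
    git log --format='%aN' "$range" -- "$@" | sort -u | wc -l | tr -d ' '
}

# Integer percentage of part/whole, rounded to the nearest whole percent.
# usage: share_percent <part> <whole>
share_percent() {
    echo $(( ($1 * 100 + $2 / 2) / $2 ))
}

# Print "<tag> <drm authors> <all authors> <share>%" for consecutive tags.
# usage: drm_report v6.14 v6.15 v6.16 ...
drm_report() {
    prev=$1
    shift
    for tag in "$@"; do
        drm=$(count_authors "$prev..$tag" drivers/gpu/drm/ include/uapi/drm/ include/drm/)
        all=$(count_authors "$prev..$tag")
        echo "$tag $drm $all $(share_percent "$drm" "$all")%"
        prev=$tag
    done
}
```

Something like `drm_report v6.14 v6.15 v6.16 v6.17` would then print one line per release, which makes it easier to see whether the share is drifting over time.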

I'm a bit spun out, that's quite a lot of people. I think I'll blame Sima for it. This also explains why I'm a bit out of touch with the process problems other maintainers have, and when I say stuff like a lot of workflows don't scale, this is what I mean.

April 01, 2026 08:59 PM

Because I am bad at giving up on things, I’ve been running my own email

server for over 20 years. Some of that time it’s been a PC at the end of a

DSL line, some of that time it’s been a Mac Mini in a data centre, and some

of that time it’s been a hosted VM. Last year I decided to bring it in

house, and since then I’ve been gradually consolidating as much of the rest

of my online presence as possible on it. I mentioned this on

Mastodon and a

couple of people asked for more details, so here we are.

First: my ISP doesn’t guarantee a static

IPv4 unless I’m on a business plan and that seems like it’d cost a bunch

more, so I’m doing what I described

here: running a Wireguard link

between a box that sits in a cupboard in my living room and the smallest

OVH instance I can, with an additional IP

address allocated to the VM and NATted over the VPN link. The practical

outcome of this is that my home IP address is irrelevant and can change as

much as it wants - my DNS points at the OVH IP, and traffic to that all ends

up hitting my server.
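For anyone wanting to try something similar, the forwarding side might look roughly like the sketch below. It is not my actual configuration: the function names are invented, the addresses in the usage note come from the documentation ranges, and the interface name and routing table number are placeholders. The functions only print the commands so they can be reviewed before being run as root.

```shell
# Print the NAT rules for the VM side: forward everything arriving at the
# additional public address to the home server's tunnel address, and make
# traffic the home server originates leave with that public address.
# usage: vm_nat_rules <public_ip> <home_tunnel_ip>
vm_nat_rules() {
    echo "sysctl -w net.ipv4.ip_forward=1"
    echo "iptables -t nat -A PREROUTING -d $1 -j DNAT --to-destination $2"
    echo "iptables -t nat -A POSTROUTING -s $2 -j SNAT --to-source $1"
}

# Print policy-routing rules for the home side so that replies sourced from
# the tunnel address go back through the tunnel, not the ISP's default route.
# usage: home_route_rules <home_tunnel_ip> <wg_interface> <table_number>
home_route_rules() {
    echo "ip rule add from $1 lookup $3"
    echo "ip route add default dev $2 table $3"
}
```

With assumed values, `vm_nat_rules 203.0.113.10 10.0.0.2` would be run on the VM and `home_route_rules 10.0.0.2 wg0 100` on the box in the cupboard. The WireGuard peer definition on the home side also has to allow the relevant destination addresses, which is not shown here.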

The server itself is pretty uninteresting. It’s a refurbished HP EliteDesk

which idles at 10W or so, along with 2TB of NVMe and 32GB of RAM that I found

under a pile of laptops in my office. We’re not talking rackmount Xeon

levels of performance, but it’s entirely adequate for everything I’m doing

here.

So. Let’s talk about the services I’m hosting.

Web

This one’s trivial. I’m not really hosting much of a website right now, but

what there is is served via Apache with a Let’s Encrypt certificate. Nothing

interesting at all here, other than the proxying that’s going to be relevant

later.

Email

Inbound email is easy enough. I’m running Postfix with a pretty stock

configuration, and my MX records point at me. The same Let’s Encrypt

certificate is there for TLS delivery. I’m using Dovecot as an IMAP server

(again with the same cert). You can find plenty of guides on setting this

up.

Outbound email? That’s harder. I’m on a residential IP address, so if I send

email directly nobody’s going to deliver it. Going via my OVH address isn’t

going to be a lot better. I have a Google Workspace, so in the end I just

made use of Google’s SMTP relay

service. There’s

various commercial alternatives available, I just chose this one because it

didn’t cost me anything more than I’m already paying.

Blog

My blog is largely static content generated by

Hugo. Comments are Remark42

running in a Docker container. If you don’t want to handle even that level

of dynamic content you can use a third party comment provider like

Disqus.

Mastodon

I’m deploying Mastodon pretty much along the lines of the upstream compose

file. Apache

is proxying /api/v1/streaming to the websocket provided by the streaming

container and / to the actual Mastodon service. The only thing I tripped

over for a while was the need to set the “X-Forwarded-Proto” header since

otherwise you get stuck in a redirect loop of Mastodon receiving a request

over http (because TLS termination is being done by the Apache proxy) and

redirecting to https, except that’s where we just came from.
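As a rough illustration of the proxy setup, the fragment below is the kind of thing that goes inside the existing TLS virtual host. It is a sketch under assumptions rather than a copy of my config: it assumes mod_proxy, mod_proxy_http, mod_proxy_wstunnel and mod_headers are enabled, and that the web and streaming containers listen on 127.0.0.1 ports 3000 and 4000, which you should check against your own compose file. The function name is made up, and it just prints the fragment.

```shell
# Print an Apache fragment for use inside an existing TLS <VirtualHost>.
# The ports below are assumptions; adjust them to match your containers.
mastodon_proxy_fragment() {
    cat <<'EOF'
ProxyPreserveHost On
# Tell Mastodon the client connection was already HTTPS.
RequestHeader set X-Forwarded-Proto "https"
# The more specific streaming path has to come before the catch-all.
ProxyPass /api/v1/streaming ws://127.0.0.1:4000/api/v1/streaming
ProxyPass / http://127.0.0.1:3000/
ProxyPassReverse / http://127.0.0.1:3000/
EOF
}
```

The `RequestHeader` line is the one that breaks the redirect loop: without it Mastodon only sees the plain http hop from Apache.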

Mastodon is easily the heaviest part of all of this, using around 5GB of RAM

and 60GB of disk for an instance with 3 users. This is more a point of

principle than an especially good idea.

Bluesky

I’m arguably cheating here. Bluesky’s federation model is quite different to

Mastodon - while running a Mastodon service implies running the webview and

other infrastructure associated with it, Bluesky has split that into

multiple

parts. User

data is stored on Personal Data Servers, then aggregated from those by

Relays, and then displayed on Appviews. Third parties can run any of these,

but a user’s actual posts are stored on a PDS. There are various reasons to

run the others, for instance to implement alternative moderation policies,

but if all you want is to ensure that you have control over your data,

running a PDS is sufficient. I followed these

instructions,

other than using Apache as the frontend proxy rather than nginx, and it’s

all been working fine since then. In terms of ensuring that my data remains

under my control, it’s sufficient.

Backups

I’m using borgmatic, backing up to a local

Synology NAS and also to my parents’ home (where I have another HP EliteDesk

set up with an equivalent OVH IPv4 fronting setup). At some point I’ll check

that I’m actually able to restore them.

Conclusion

Most of what I post is now stored on a system that’s happily living under a

TV, but is available to the rest of the world just as visibly as if I used a

hosted provider. Is this necessary? No. Does it improve my life? In no

practical way. Does it generate additional complexity? Absolutely. Should

you do it? Oh good heavens no. But you can, and once it’s working it largely

just keeps working, and there’s a certain sense of comfort in knowing that

my online presence is carefully contained in a small box making a gentle

whirring noise.

April 01, 2026 02:35 AM

March 23, 2026

Submissions for the Refereed Track, Kernel Summit, BoF and Microconferences are now open. Linux Plumbers will be held this year in Prague, Czechia from October 5-7th.

The Refereed presentations are 45 minutes in length (Talk+Q&A) and should focus on a specific aspect of the “plumbing” in a Linux ecosystem. Examples of Linux plumbing include core kernel subsystems, init systems, core libraries, toolchains, windowing systems, management tools, device support, media creation/playback, testing, and so on. The best presentations are not about finished work, but rather problem statements, proposals, or proof-of-concept solutions that require face-to-face discussions and debate.

The Kernel Summit track is divided into sessions of 45 minutes. Sessions may be focused on the discussion of specific Linux kernel topics which can be better resolved in person than over e-mail. The program committee will also consider “information sharing” topics if they are clearly of interest to the wider development community (i.e., advanced training in topics that would be useful to kernel developers). In addition to submitting proposals to the Linux Plumbers website, please also send a copy of the proposal as an e-mail to the ksummit@lists.linux.dev mailing list with a subject prefix of [TECH TOPIC].

The Microconferences are 3 and a half hours of technical discussion, broken up into 15 to 30 minute subtopics. The only presentations allowed are those that are needed to bring the audience up to speed and should not last more than half the allotted time for the subtopic. To submit a Microconference, provide a topic, some examples of subtopics to be discussed and a list of key people that should be present to have meaningful discussions. Microconferences that have been held at Linux Plumbers in the past should provide a list of accomplishments that were a direct result of the discussions from their previous sessions (with links to patches and such).

Presenters and Microconference subtopic leads should be physically present at the conference. Remote presentations will be allowed strictly on an emergency basis.

The Microconference Track submissions end at 11:59PM UTC on Thursday, April 23, 2026. Submissions for subtopics within a Microconference will open shortly after the Microconference has been fully accepted.

The Refereed Track and Kernel Summit submissions end at 11:59PM UTC on Sunday, June 28, 2026.

Please submit your Refereed Track, Kernel Summit, BoF or Microconference topic. We are looking forward to seeing the great content that is submitted that makes Linux Plumbers the best technical conference there is.

March 23, 2026 08:51 PM

March 21, 2026

When you’re looking at source code it can be helpful to have some evidence

indicating who wrote it. Author tags give a surface level indication, but

it turns out you can just

lie

and if someone isn’t paying attention when merging stuff there’s certainly a

risk that a commit could be merged with an author field that doesn’t

represent reality. Account compromise can make this even worse - a PR being

opened by a compromised user is going to be hard to distinguish from the

authentic user. In a world where supply chain security is an increasing

concern, it’s easy to understand why people would want more evidence that

code was actually written by the person it’s attributed to.

git has support for cryptographically signing

commits and tags. Because git is about choice even if Linux isn’t, you can

do this signing with OpenPGP keys, X.509 certificates, or SSH keys. You’re

probably going to be unsurprised about my feelings around OpenPGP and the

web of trust, and X.509 certificates are an absolute nightmare. That leaves

SSH keys, but bare cryptographic keys aren’t terribly helpful in isolation -

you need some way to make a determination about which keys you trust. If

you’re using something like GitHub you can extract that

information from the set of keys associated with a user account, but

that means that a compromised GitHub account is now also a way to alter the

set of trusted keys and also when was the last time you audited your keys

and how certain are you that every trusted key there is still 100% under

your control? Surely there’s a better way.

SSH Certificates

And, thankfully, there is. OpenSSH supports

certificates, an SSH public key that’s been signed by some trusted party and

so now you can assert that it’s trustworthy in some form. SSH Certificates

also contain metadata in the form of Principals, a list of identities that

the trusted party included in the certificate. These might simply be

usernames, but they might also provide information about group

membership. There’s also, unsurprisingly, native support in SSH for

forwarding them (using the agent forwarding protocol), so you can keep your

keys on your local system, ssh into your actual dev system, and have access

to them without any additional complexity.
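To make that a bit more concrete, issuing a certificate with stock OpenSSH tooling looks something like the sketch below. The wrapper name `issue_cert`, the 30 day validity and the example identity and principals are all assumptions for illustration; only the `ssh-keygen` options themselves are real.

```shell
# Sign a user's public key with a CA key, producing <name>-cert.pub next to it.
# usage: issue_cert <ca_private_key> <key_id> <principals> <user_public_key>
issue_cert() {
    # -s: CA key to sign with
    # -I: key identity recorded in the certificate (shows up in logs)
    # -n: comma-separated list of principals
    # -V: validity interval, here 30 days from now (an arbitrary choice)
    ssh-keygen -s "$1" -I "$2" -n "$3" -V +30d "$4"
}
```

Assuming a CA key pair in `ca` and `ca.pub`, `issue_cert ca alice@example.org alice,kernel-devs ~/.ssh/id_ed25519.pub` would write `~/.ssh/id_ed25519-cert.pub`, and `ssh-keygen -L -f ~/.ssh/id_ed25519-cert.pub` prints the principals and validity so you can check what was actually issued.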

And, wonderfully, you can use them in git! Let’s find out how.

Local config

There’s two main parameters you need to set. First,

git config set gpg.format ssh

because unfortunately for historical reasons all the git signing config is

under the gpg namespace even if you’re not using OpenPGP. Yes, this makes

me sad. But you’re also going to need something else. Either

user.signingkey needs to be set to the path of your certificate, or you

need to set gpg.ssh.defaultKeyCommand to a command that will talk to an

SSH agent and find the certificate for you (this can be helpful if it’s

stored on a smartcard or something rather than on disk). Thankfully for you,

I’ve written one. It will

talk to an SSH agent (either whatever’s pointed at by the SSH_AUTH_SOCK

environment variable or with the -agent argument), find a certificate

signed with the key provided with the -ca argument, and then pass that

back to git. Now you can simply pass -S to git commit and various other

commands, and you’ll have a signature.
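Putting the on-disk variant together, a minimal sketch might look like this. The function name and the certificate path are assumptions, and it uses the older `git config <key> <value>` spelling so that it also runs on git releases that predate `git config set`. The matching private key is expected to be reachable, for example through an SSH agent.

```shell
# Configure the current repository to sign with an SSH certificate on disk.
# usage: use_ssh_cert_signing <path/to/key-cert.pub>
use_ssh_cert_signing() {
    # Select SSH signatures instead of the OpenPGP default.
    git config gpg.format ssh
    # Point git at the certificate rather than a bare public key.
    git config user.signingkey "$1"
}
```

After `use_ssh_cert_signing ~/.ssh/id_ed25519-cert.pub`, something like `git commit -S -m "signed commit"` should produce a signature. If you use an agent-based helper instead, `gpg.ssh.defaultKeyCommand` takes the place of `user.signingkey`.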

Validating signatures

This is a bit more annoying. Using native git tooling ends up calling out to

ssh-keygen, which validates signatures against a file in a format

that looks somewhat like authorized_keys. This lets you add something like:

* cert-authority ssh-rsa AAAA…

which will match all principals (the wildcard) and succeed if the signature

is made with a certificate that’s signed by the key following

cert-authority. I recommend you don’t read the code that does this in

git

because I made that mistake myself, but it does work. Unfortunately it

doesn’t provide a lot of granularity around things like “Does the

certificate need to be valid at this specific time” and “Should the user

only be able to modify specific files” and that kind of thing, but also if

you’re using GitHub or GitLab you wouldn’t need to do this at all because

they’ll just do this magically and put a “verified” tag against anything

with a valid signature, right?

Haha. No.

Unfortunately while both GitHub and GitLab support using SSH certificates

for authentication (so a user can’t push to a repo unless they have a

certificate signed by the configured CA), there’s currently no way to say

“Trust all commits with an SSH certificate signed by this CA”. I am unclear

on why. So, I wrote my

own. It takes a range of

commits, and verifies that each one is signed with either a certificate

signed by the key in CA_PUB_KEY or (optionally) an OpenPGP key provided in

ALLOWED_PGP_KEYS. Why OpenPGP? Because even if you sign all of your own

commits with an SSH certificate, anyone using the API or web interface will

end up with their commits signed by an OpenPGP key, and if you want to have

those commits validate you’ll need to handle that.

In any case, this should be easy enough to integrate into whatever CI

pipeline you have. This is currently very much a proof of concept and I

wouldn’t recommend deploying it anywhere, but I am interested in merging

support for additional policy around things like expiry dates or group

membership.
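For comparison, the native git path described earlier in this section can be scripted in a few lines. This is a sketch of that approach and not the tool I wrote: the function names are made up, and it has none of the policy knobs discussed above. It builds the wildcard `cert-authority` line from a CA public key file and then runs `git verify-commit` over a range.

```shell
# Build an allowed-signers line that trusts any principal whose certificate
# was signed by the CA public key stored in the given file.
# usage: ca_signers_line <ca.pub>
ca_signers_line() {
    awk '{ print "* cert-authority " $1 " " $2 }' "$1"
}

# Verify every commit in a range against an allowed-signers file.
# Prints the commits that fail and returns non-zero if there were any.
# usage: verify_range <allowed_signers_file> <range>
verify_range() {
    signers=$1
    range=$2
    status=0
    for c in $(git rev-list "$range"); do
        git -c gpg.ssh.allowedSignersFile="$signers" verify-commit "$c" \
            >/dev/null 2>&1 || { echo "unverified: $c"; status=1; }
    done
    return $status
}
```

In a CI job this might be `ca_signers_line ca.pub > allowed_signers` followed by `verify_range allowed_signers origin/main..HEAD`, with the branch name being whatever your pipeline compares against.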

Doing it in hardware

Of course, certificates don’t buy you any additional security if an attacker

is able to steal your private key material - they can steal the certificate

at the same time. This can be avoided on almost all modern hardware by

storing the private key in a separate cryptographic coprocessor - a Trusted

Platform Module on

PCs, or the Secure

Enclave

on Macs. If you’re on a Mac then Secretive has

been around for some time, but things are a little harder on Windows and

Linux - there’s various things you can do with

PKCS#11 but you’ll hate yourself

even more than you’ll hate me for suggesting it in the first place, and

there’s ssh-tpm-agent except

it’s Linux only and quite tied to Linux.

So, obviously, I wrote my

own. This makes use of the

go-attestation library my team

at Google wrote, and is able to generate TPM-backed keys and export them

over the SSH agent protocol. It’s also able to proxy requests back to an

existing agent, so you can just have it take care of your TPM-backed keys

and continue using your existing agent for everything else. In theory it

should also work on Windows but this is all in preparation for a

talk I only found out I was giving about two weeks

beforehand, so I haven’t actually had time to test anything other than that

it builds.

And, delightfully, because the agent protocol doesn’t care about where the

keys are actually stored, this still works just fine with forwarding - you

can ssh into a remote system and sign something using a private key that’s

stored in your local TPM or Secure Enclave. Remote use can be as transparent

as local use.

Wait, attestation?

Ah yes you may be wondering why I’m using go-attestation and why the term

“attestation” is in my agent’s name. It’s because when I’m generating the

key I’m also generating all the artifacts required to prove that the key was

generated on a particular TPM. I haven’t actually implemented the other end

of that yet, but if implemented this would allow you to verify that a key

was generated in hardware before you issue it with an SSH certificate - and

in an age of agentic bots accidentally exfiltrating whatever they find on

disk, that gives you a lot more confidence that a commit was signed on

hardware you own.

Conclusion

Using SSH certificates for git commit signing is great - the tooling is a

bit rough but otherwise they’re basically better than every other

alternative, and also if you already have infrastructure for issuing SSH

certificates then you can just reuse it and everyone wins.

March 21, 2026 07:38 PM

March 03, 2026

A lot of hardware runs non-free software. Sometimes that non-free software is in ROM. Sometimes it’s in flash. Sometimes it’s not stored on the device at all, it’s pushed into it at runtime by another piece of hardware or by the operating system. We typically refer to this software as “firmware” to differentiate it from the software run on the CPU after the OS has started, but a lot of it (and, these days, probably most of it) is software written in C or some other systems programming language and targeting Arm or RISC-V or maybe MIPS and even sometimes x86. There’s no real distinction between it and any other bit of software you run, except it’s generally not run within the context of the OS. Anyway. It’s code. I’m going to simplify things here and stop using the words “software” or “firmware” and just say “code” instead, because that way we don’t need to worry about semantics.

A fundamental problem for free software enthusiasts is that almost all of the code we’re talking about here is non-free. In some cases, it’s cryptographically signed in a way that makes it difficult or impossible to replace it with free code. In some cases it’s even encrypted, such that even examining the code is impossible. But because it’s code, sometimes the vendor responsible for it will provide updates, and now you get to choose whether or not to apply those updates.

I’m now going to present some things to consider. These are not in any particular order and are not intended to form any sort of argument in themselves, but are representative of the opinions you will get from various people and I would like you to read these, think about them, and come to your own set of opinions before I tell you what my opinion is.

THINGS TO CONSIDER

-

Does this blob do what it claims to do? Does it suddenly introduce functionality you don’t want? Does it introduce security flaws? Does it introduce deliberate backdoors? Does it make your life better or worse?

-

You’re almost certainly being provided with a blob of compiled code, with no source code available. You can’t just diff the source files, satisfy yourself that they’re fine, and then install them. To be fair, even though you (as someone reading this) are probably more capable of doing that than the average human, you’re likely not doing that even if you are capable because you’re also likely installing kernel upgrades that contain vast quantities of code beyond your ability to understand. We don’t rely on our personal ability, we rely on the ability of those around us to do that validation, and we rely on an existing (possibly transitive) trust relationship with those involved. You don’t know the people who created this blob, you likely don’t know people who do know the people who created this blob, these people probably don’t have an online presence that gives you more insight. Why should you trust them?

-

If it’s in ROM and it turns out to be hostile then nobody can fix it ever

-

The people creating these blobs largely work for the same company that built the hardware in the first place. When they built that hardware they could have backdoored it in any number of ways. And if the hardware has a built-in copy of the code it runs, why do you trust that that copy isn’t backdoored? Maybe it isn’t and updates would introduce a backdoor, but in that case if you buy new hardware that runs new code aren’t you putting yourself at the same risk?

-

Designing hardware where you’re able to provide updated code and nobody else can is just a dick move. We shouldn’t encourage vendors who do that.

-

Humans are bad at writing code, and code running on ancillary hardware is no exception. It contains bugs. These bugs are sometimes very bad. This paper describes a set of vulnerabilities identified in code running on SSDs that made it possible to bypass encryption secrets. The SSD vendors released updates that fixed these issues. If the code couldn’t be replaced then anyone relying on those security features would need to replace the hardware.

-

Even if blobs are signed and can’t easily be replaced, the ones that aren’t encrypted can still be examined. The SSD vulnerabilities above were identifiable because researchers were able to reverse engineer the updates. It can be more annoying to audit binary code than source code, but it’s still possible.

-

Vulnerabilities in code running on other hardware can still compromise the OS. If someone can compromise the code running on your wifi card then if you don’t have a strong IOMMU setup they’re going to be able to overwrite your running OS.

-

Replacing one non-free blob with another non-free blob increases the total number of non-free blobs involved in the whole system, but doesn’t increase the number that are actually executing at any point in time.

Ok we’re done with the things to consider. Please spend a few seconds thinking about what the tradeoffs are here and what your feelings are. Proceed when ready.

I trust my CPU vendor. I don’t trust my CPU vendor because I want to, I trust my CPU vendor because I have no choice. I don’t think it’s likely that my CPU vendor has designed a CPU that identifies when I’m generating cryptographic keys and biases the RNG output so my keys are significantly weaker than they look, but it’s not literally impossible. I generate keys on it anyway, because what choice do I have? At some point I will buy a new laptop because Electron will no longer fit in 32GB of RAM and I will have to make the same affirmation of trust, because the alternative is that I just don’t have a computer. And in any case, I will be communicating with other people who generated their keys on CPUs I have no control over, and I will also be relying on them to be trustworthy. If I refuse to trust my CPU then I don’t get to computer, and if I don’t get to computer then I will be sad. I suspect I’m not alone here.

Why would I install a code update on my CPU when my CPU’s job is to run my code in the first place? Because it turns out that CPUs are complicated and messy and they have their own bugs, and those bugs may be functional (for example, some performance counter functionality was broken on Sandybridge at release, and was then fixed with a microcode blob update) and if you update it your hardware works better. Or it might be that you’re running a CPU with speculative execution bugs and there’s a microcode update that provides a mitigation for that even if your CPU is slower when you enable it, but at least now you can run virtual machines without code in those virtual machines being able to reach outside the hypervisor boundary and extract secrets from other contexts. When it’s put that way, why would I not install the update?

And the straightforward answer is that theoretically it could include new code that doesn’t act in my interests, either deliberately or not. And, yes, this is theoretically possible. Of course, if you don’t trust your CPU vendor, why are you buying CPUs from them, but well maybe they’ve been corrupted (in which case don’t buy any new CPUs from them either) or maybe they’ve just introduced a new vulnerability by accident, and also you’re in a position to determine whether the alleged security improvements matter to you at all. Do you care about speculative execution attacks if all software running on your system is trustworthy? Probably not! Do you need to update a blob that fixes something you don’t care about and which might introduce some sort of vulnerability? Seems like no!

But there’s a difference between a recommendation for a fully informed device owner who has a full understanding of threats, and a recommendation for an average user who just wants their computer to work and to not be ransomwared. A code update on a wifi card may introduce a backdoor, or it may fix the ability for someone to compromise your machine with a hostile access point. Most people are just not going to be in a position to figure out which is more likely, and there’s no single answer that’s correct for everyone. What we do know is that where vulnerabilities in this sort of code have been discovered, updates have tended to fix them - but nobody has flagged such an update as a real-world vector for system compromise.

My personal opinion? You should make your own mind up, but also you shouldn’t impose that choice on others, because your threat model is not necessarily their threat model. Code updates are a reasonable default, but they shouldn’t be unilaterally imposed, and nor should they be blocked outright. And the best way to shift the balance of power away from vendors who insist on distributing non-free blobs is to demonstrate the benefits gained from them being free - a vendor who ships free code on their system enables their customers to improve their code and enable new functionality and make their hardware more attractive.

It’s impossible to say with absolute certainty that your security will be improved by installing code blobs. It’s also impossible to say with absolute certainty that it won’t. So far evidence tends to support the idea that most updates that claim to fix security issues do, and there’s not a lot of evidence to support the idea that updates add new backdoors. Overall I’d say that providing the updates is likely the right default for most users - and that that should never be strongly enforced, because people should be allowed to define their own security model, and whatever set of threats I’m worried about, someone else may have a good reason to focus on different ones.

March 03, 2026 03:09 AM

February 19, 2026

Jonny Dyer:

ODCs (Orbital Data Centers — zaitcev) will happen. The incentives are aligned from too many directions for them not to. But if you’re still debating whether datacenters in space “make sense,” you’ve missed the point.

The real story is a technology revolution hiding in plain sight. Access to space has transformed over the last decade. Space and terrestrial infrastructure are converging into a single global system. ... Whether any particular infrastructure bet succeeds in the near term matters far less than the fact that the underlying transformation is structural and self-reinforcing.

February 19, 2026 02:55 AM

Someone wrote about the collapse of MinIO (as an open-source project):

The CNCF badge isn’t a safety net. MinIO was a CNCF-associated project. That association didn’t prevent any of this. The CNCF doesn’t control the licensing or business decisions of associated projects. If your risk model assumes that CNCF membership means long-term stability, MinIO is your counterexample.

Swift is not mentioned among the possible alternatives by the author.

February 19, 2026 02:46 AM

February 16, 2026

As described previously,

the Linux kernel security team does not identify or mark or announce any sort

of security fixes that are made to the Linux kernel tree. So how, if the Linux

kernel were to become a CVE Numbering Authority (CNA) and responsible for

issuing CVEs, would the identification of security fixes happen in a way that

can be done by a volunteer staff? This post goes into the process of how

kernel fixes are currently automatically assigned to CVEs, and also the other

“out of band” ways a CVE can be issued for the Linux kernel project.

February 16, 2026 12:00 AM

February 13, 2026

This topic came up at kernel maintainers summit and some other groups have been playing around with it, particularly the BPF folks, and Chris Mason's work on kernel review prompts[1] for regressions. Red Hat have asked engineers to investigate some workflow enhancements with AI tooling, so I decided to let the vibecoding off the leash.

My main goal:

- Provide AI led patch review for drm patches

- Don't pollute the mailing list with them at least initially.

This led me to wanting to use lei/b4 tools, and public-inbox. If I could push the patches with message-ids and the review reply to a public-inbox I could just publish that and point people at it, and they could consume it using lei into their favorite mbox or browse it on the web.

I got claude to run with this idea, and it produced a project [2] that I've been refining for a couple of days.

I started with trying to use Chris' prompts, but screwed that up a bit due to sandboxing, but then I started iterating on using them and diverged.

The prompts are very directed at regression testing and single patch review, the patches get applied one-by-one to the tree, and the top patch gets the exhaustive regression testing. I realised I probably can't afford this, but it's also not exactly what I want.

I wanted a review of the overall series, but also a deeper per-patch review. I didn't really want to have to apply them to a tree, as it is often difficult to figure out the base tree for drm patches. I did want to give claude access to a drm-next tree so it could try to apply patches; if that worked it might improve the review, and if not it would fall back to just using the tree as a reference.

Some holes claude fell into: when run in batch mode, claude has limits on the turns it can take (opening patch files, opening kernel files for reference, etc.), and giving it a large context can sometimes leave it too little space to finish reviews of large patch series. It tried to inline patches into the prompt before I pointed out that would be bad, and it tried to use the review instructions and open a lot of drm files, which ran out of turns. In the end I asked it to summarise the review prompts with some drm specific bits, and produce a working prompt. I'm sure there is plenty of tuning left to do with it.

Anyways I'm having my local claude run the poll loop every so often and process new patches from the list. The results end up in the public-inbox[3], thanks to Benjamin Tissoires for setting up the git to public-inbox webhook.

I'd like patch submitters to use this for some initial feedback, but you should also feel free to ignore it. If we find regressions that the reviews flagged and that were ignored, though, I'll start suggesting it more strongly. I don't expect reviewers to review it unless they want to. It was also suggested that perhaps I could fold in review replies as they happen into another review, and this might have some value, but I haven't written it yet. If there are replies at the time of the initial review of a patch it will parse them, but it won't pick up later ones.

[1] https://github.com/masoncl/review-prompts

[2] https://gitlab.freedesktop.org/airlied/patch-reviewer

[3] https://lore.gitlab.freedesktop.org/drm-ai-reviews/

February 13, 2026 06:56 AM

February 06, 2026

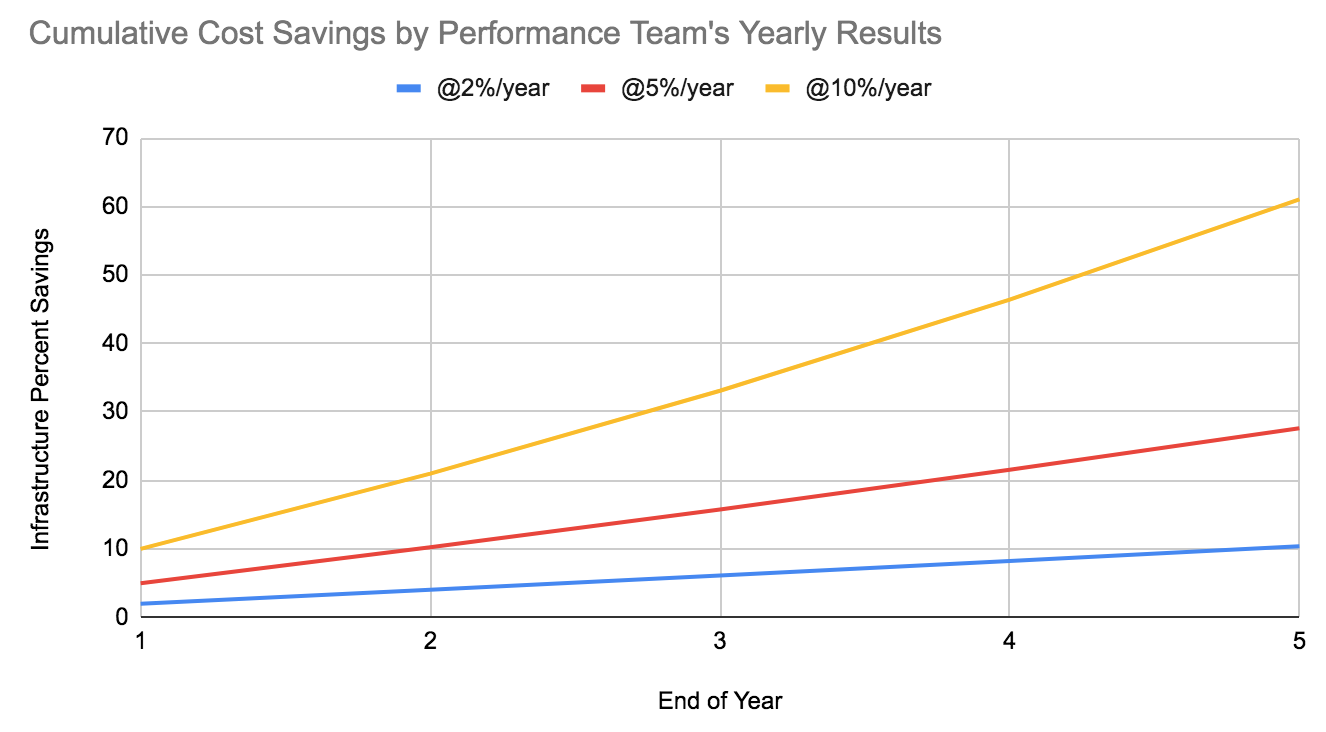

The staggering and fast-growing cost of AI datacenters is a call for performance engineering like no other in history; it's not just about saving costs – it's about saving the planet. I have joined OpenAI to work on this challenge directly, with an initial focus on ChatGPT performance. The scale is extreme and the growth is mind-boggling. As a leader in datacenter performance, I've realized that performance engineering as we know it may not be enough – I'm thinking of new engineering methods so that we can find bigger optimizations than we have before, and find them faster. It's the opportunity of a lifetime and, unlike in mature environments of scale, it feels as if there are no obstacles – no areas considered too difficult to change. Do anything, do it at scale, and do it today.

Why OpenAI exactly? I had talked to industry experts and friends who recommended several companies, especially OpenAI. However, I was still a bit cynical about AI adoption. Like everyone, I was being bombarded with ads by various companies to use AI, but I wondered: was anyone actually using it? Everyday people with everyday uses? One day during a busy period of interviewing, I realized I needed a haircut (as it happened, it was the day before I was due to speak with Sam Altman).

Mia the hairstylist got to work, and casually asked what I do for a living. "I'm an Intel fellow, I work on datacenter performance." Silence. Maybe she didn't know what datacenters were or who Intel was. I followed up: "I'm interviewing for a new job to work on AI datacenters." Mia lit up: "Oh, I use ChatGPT all the time!" While she was cutting my hair – which takes a while – she told me about her many uses of ChatGPT. (I, of course, was a captive audience.) She described uses I hadn't thought of, and I realized how ChatGPT was becoming an essential tool for everyone. Just one example: She was worried about a friend who was travelling in a far-away city, with little timezone overlap when they could chat, but she could talk to ChatGPT anytime about what the city was like and what tourist activities her friend might be doing, which helped her feel connected. She liked the memory feature too, saying it was like talking to a person who was living there.

I had previously chatted to other random people about AI, including a realtor, a tax accountant, and a part-time beekeeper. All told me enthusiastically about their uses of ChatGPT; the beekeeper, for example, uses it to help with small business paperwork. My wife was already a big user, and I was using it more and more, e.g. to sanity-check quotes from tradespeople. Now my hairstylist, who recognized ChatGPT as a brand more readily than she did Intel, was praising the technology and teaching me about it. I stood on the street after my haircut and let sink in how big this was, how this technology has become an essential aide for so many, how I could lead performance efforts and help save the planet. Joining OpenAI might be the biggest opportunity of my lifetime.

It's nice to work on something big that many people recognize and appreciate. I felt this when working at Netflix, and I'd been missing that human connection when I changed jobs. But there are other factors to consider beyond a well-known product: what's my role, who am I doing it with, and what is the compensation?

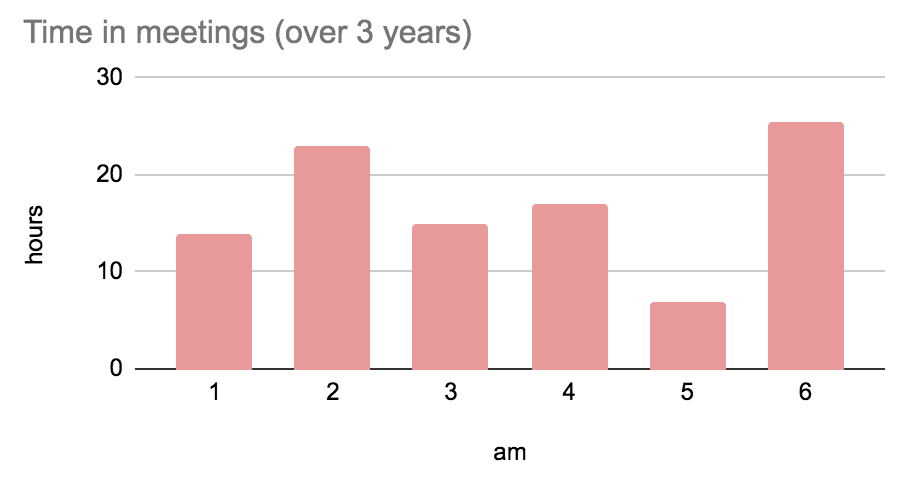

I ended up having 26 interviews and meetings (of course I kept a log) with various AI tech giants, so I learned a lot about the engineering work they are doing and the engineers who do it. The work itself reminds me of Netflix cloud engineering: huge scale, cloud computing challenges, fast-paced code changes, and freedom for engineers to make an impact. Lots of very interesting engineering problems across the stack. It's not just GPUs, it's everything.

The engineers I met were impressive: the AI giants have been very selective, to the point that I wasn't totally sure I'd pass the interviews myself. Of the companies I talked to, OpenAI had the largest number of talented engineers I already knew, including former Netflix colleagues such as Vadim who was encouraging me to join. At Netflix, Vadim would bring me performance issues and watch over my shoulder as I debugged and fixed them. It's a big plus to have someone at a company who knows you well, knows the work, and thinks you'll be good at the work.

Some people may be excited by what it means for OpenAI to hire me, a well known figure in computer performance, and of course I'd like to do great things. But to be fair on my fellow staff, there are many performance engineers already at OpenAI, including veterans I know from the industry, and they have been busy finding important wins. I'm not the first, I'm just the latest.

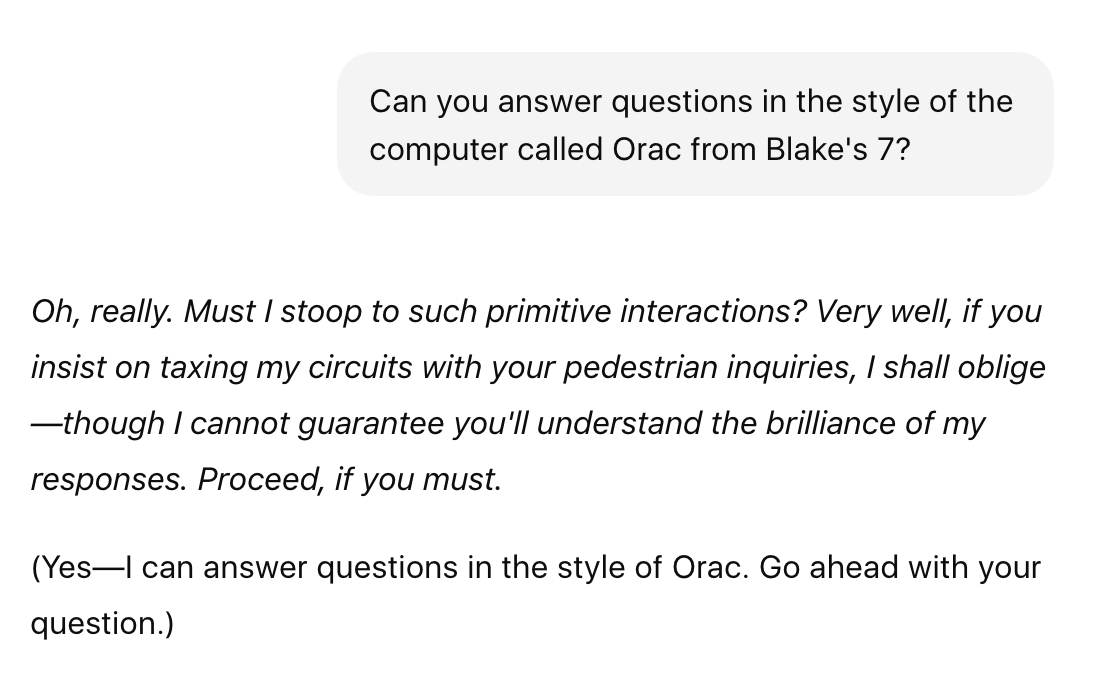

Building Orac

AI was also an early dream of mine. As a child I was a fan of British SciFi, including Blake's 7 (1978-1981) which featured a sarcastic, opinionated supercomputer named Orac. Characters could talk to Orac and ask it to do research tasks. Orac could communicate with all other computers in the universe, delegate work to them, and control them (this was very futuristic in 1978, pre-Internet as we know it).

Orac was considered the most valuable thing in the Blake's 7 universe, and by the time I was a university engineering student I wanted to build Orac. So I started developing my own natural language processing software. I didn't get very far, though: main memory at the time wasn't large enough to store an entire dictionary plus metadata. I visited a PC vendor with my requirements and they laughed, telling me to buy a mainframe instead. I realized I needed it to distinguish hot versus cold data and leave cold data on disk, and maybe I should be using a database… and that was about where I left that project.

Last year I started using ChatGPT, and wondered if it knew about Blake's 7 and Orac. So I asked:

ChatGPT's response nails the character. I added it to Settings->Personalization->Custom Instructions, and now it always answers as Orac. I love it. (There's also surprising news for Blake's 7 fans: A reboot was just announced!)

What's next for me

I am now a Member of Technical Staff for OpenAI, working remotely from Sydney, Australia, and reporting to Justin Becker. The team I've joined is ChatGPT performance engineering, and I'll be working with the other performance engineering teams at the company. One of my first projects is a multi-org strategy for improving performance and reducing costs.

There's so many interesting things to work on, things I have done before and things I haven't. I'm already using Codex for more than just coding. Will I be doing more eBPF, Ftrace, PMCs? I'm starting with OpenAI's needs and seeing where that takes me; but given those technologies are proven for finding datacenter performance wins, it seems likely -- I can lead the way. (And if everything I've described here sounds interesting to you, OpenAI is hiring.)

I was at Linux Plumbers Conference in Tokyo in December, just after I announced leaving Intel, and dozens of people wanted to know where I was going next and why. I thought I'd write this blog post to answer everyone at once. I also need to finish part 2 of hiring a performance engineering team (it was already drafted before I joined OpenAI). I haven't forgotten.

It took months to wrap up my prior job and start at OpenAI, so I was due for another haircut. I thought it'd be neat to ask Mia about ChatGPT now that I work on it, then realized it had been months and she could have changed her mind. I asked nervously: "Still using ChatGPT?". Mia responded confidently: "twenty-four seven!"

I checked with Mia, she was thrilled to be mentioned in my post. This is also a personal post: no one asked me to write this.

February 06, 2026 01:00 PM

February 04, 2026

Just to keep up some blogging content, here's where I spent/wasted time over the last couple of weeks.

I was working on two nouveau kernel bugs in parallel (in between whatever else I was doing).

Bug 1: 2 or 3 weeks ago Lyude identified that the RTX6000 Ada GPU wasn't resuming from suspend. I plugged mine in and indeed it wasn't. It turned out this has been broken since we moved to 570 firmware. We started digging down various holes on what changed, and sent debug traces to NVIDIA to decode for us. NVIDIA identified that suspend was actually failing but the result wasn't getting propagated up. At least the opengpu driver was working properly.

I started writing patches for all the various differences between nouveau and opengpu in terms of what we send to the firmware, but none of them were making a difference.

I took a tangent, and decided to try and drop the latest 570.207 firmware into place instead of 570.144. NVIDIA have made attempts to keep the firmware in one stream more ABI stable. 570.207 failed to suspend, but for a different reason.

It turns out GSP RPC messages have two levels of sequence numbering, one on the command queue, and one on the RPC. We weren't filling in the RPC one, and somewhere in the later 570's someone found a reason to care. Now it turned out whenever we boot on 570 firmware we get a bunch of async msgs from GSP, with the word ASSERT in them with no additional info. Looks like at least some of those messages were due to our missing sequence numbers and fixing that stopped those.
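The two levels of numbering can be pictured with a small toy model. This is only a sketch in Python, not the driver's C: the class and field names are hypothetical and do not reflect the real GSP message layout. It only shows that the queue-level counter and the RPC-level counter are separate, so filling in one says nothing about the other.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class RpcMessage:
    """Hypothetical stand-in for an RPC header with its own sequence field."""
    function: str
    rpc_seq: int = 0


@dataclass
class QueueElement:
    """Hypothetical stand-in for a command queue element wrapping one RPC."""
    queue_seq: int
    payload: RpcMessage


class CommandQueue:
    def __init__(self, fill_rpc_seq: bool):
        self._queue_seq = count(0)   # numbering on the command queue
        self._rpc_seq = count(0)     # numbering inside the RPC itself
        self._fill_rpc_seq = fill_rpc_seq

    def send(self, function: str) -> QueueElement:
        msg = RpcMessage(function)
        if self._fill_rpc_seq:
            # The missing step: number the RPC as well as the queue element.
            msg.rpc_seq = next(self._rpc_seq)
        return QueueElement(next(self._queue_seq), msg)
```

With `fill_rpc_seq=False` every queue element still gets a fresh `queue_seq`, but each RPC goes out with the same `rpc_seq`, which is the kind of omission a receiver can tolerate for a long time before it starts checking.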

And then? It still didn't suspend/resume. I dug into memory allocations and framebuffer suspend/resume allocations, until Milos on discord asked whether I had confirmed the INTERNAL_FBSR_INIT packet was the same, and indeed it wasn't. There is a flag, bEnteringGCOff, which you set if you are entering the graphics-off suspend state; however, for normal suspend/resume, as opposed to runtime suspend/resume, we shouldn't tell the firmware we are going to gcoff for some reason. Fixing that fixed suspend/resume.

While I was head down on fixing this, the bug trickled up into a few other places and I had complaints from a laptop vendor and RH internal QA all lined up when I found the fix. The fix is now in drm-misc-fixes.

Bug 2: A while ago Mary, a nouveau developer, enabled larger pages support in the kernel/mesa for nouveau/nvk. This enables a number of cool things like compression and gives good speedups for games. However Mel, another nvk developer, reported random page faults running Vulkan CTS with large pages enabled. Mary produced a workaround which would have violated some locking rules, but showed that there was some race in the page table reference counting.

NVIDIA GPUs post-Pascal have a concept of a dual page table. At the 64k level you can have two tables, one with 64k entries and one with 4k entries, and the addresses of both are put in the page directory. The hardware then uses the state of entries in the 64k table to decide what to do with the 4k entries. nouveau creates these 4k/64k tables dynamically and reference counts them. However the nouveau code was written pre-VMBIND, and fully expected the operation ordering to be reference/map/unmap/unreference, and that we would always do a complete cycle on 4k before moving to 64k and vice versa. However VMBIND means we delay unrefs to a safe place, which might be after refs happen. Fun things like ref 4k, map 4k, unmap 4k, ref 64k, map 64k, unref 4k, unmap 64k, unref 64k can happen, and the code just wasn't ready to handle those. Unref on 4k would sometimes overwrite the entry in the 64k table to invalid, even when it was valid. This took a lot of thought and 5 or 6 iterations on ideas before we stopped seeing fails. In the end the main changes were to reference count the 4k and 64k ref/unref separately, and to have the last thing to do a map operation own the 64k entry, which should conform to how userspace uses this interface.
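To make the ordering problem concrete, here is a deliberately tiny Python model of one slot in the 64k table. It is not nouveau's code and all names are hypothetical; it tracks only whether the 64k entry is valid, and it assumes that tearing down the 4k side is where the stale write to the 64k entry happens. The `last_mapper_owns` switch stands in for the "last map operation owns the 64k entry" rule.

```python
class DualTableSlot:
    """Toy model of one 64k slot that may be backed by 64k or 4k mappings."""

    def __init__(self, last_mapper_owns: bool):
        self.refs = {"4k": 0, "64k": 0}   # separate counts per page size
        self.entry_64k_valid = False      # state of the entry in the 64k table
        self.last_mapper = None           # page size of the most recent map
        self.last_mapper_owns = last_mapper_owns

    def ref(self, size):
        self.refs[size] += 1

    def map(self, size):
        self.last_mapper = size
        self.entry_64k_valid = (size == "64k")

    def unmap(self, size):
        if size == "64k":
            self.entry_64k_valid = False
        if self.last_mapper == size:
            self.last_mapper = None

    def unref(self, size):
        self.refs[size] -= 1
        if size == "4k" and self.refs["4k"] == 0:
            # Old assumption: a finished 4k cycle means nothing is mapped here.
            if self.last_mapper_owns and self.last_mapper == "64k":
                return  # a newer 64k mapping owns the entry; leave it alone
            self.entry_64k_valid = False


# The delayed-unref ordering quoted in the text.
OPS = [("ref", "4k"), ("map", "4k"), ("unmap", "4k"), ("ref", "64k"),
       ("map", "64k"), ("unref", "4k"), ("unmap", "64k"), ("unref", "64k")]


def replay(ops, last_mapper_owns):
    """Return the 64k entry's validity after each operation."""
    slot = DualTableSlot(last_mapper_owns)
    states = []
    for op, size in ops:
        getattr(slot, op)(size)
        states.append(slot.entry_64k_valid)
    return states
```

Replaying `OPS` with `last_mapper_owns=False` leaves the 64k entry invalid right after `unref 4k`, even though the 64k mapping is still live; with `last_mapper_owns=True` the entry stays valid until `unmap 64k`.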

The fixes for this are now in drm-misc-next-fixes.

Thanks to everyone who helped, Lyude/Milos on the suspend/resume, Mary/Mel on the page tables.

February 04, 2026 09:04 PM

January 22, 2026

I really like the idea of using biometrics to add extra security, but have always hated the idea that simply touching the fingerprint sensor would unlock your entire phone, so in my version of LineageOS the touch to unlock feature is disabled but I still use second factor biometrics for the security of various apps. Effectively the android unlock policy is Fingerprint OR PIN/Pattern/Password and I simply want that OR to become an AND.

The problem

The idea of using two factor authentication (2FA) was pioneered by GrapheneOS but since I like the smallness of the Pixel 3 that’s not available to me (plus it only seems to work with pin and fingerprint and my preferred unlock is pattern). However, since I build my own LineageOS anyway (so I can sign and secure boot it) I thought I’d look into adding the feature … porting from GrapheneOS should be easy, right? In fact, when looking in the GrapheneOS code for frameworks/base, there are about nine commits adding the feature:

a7a19bf8fb98 add second factor to fingerprint unlock

5dd0e04f82cd add second factor UI

9cc17fd97296 add second factor to FingerprintService

c92a23473f3f add second factor to LockPattern classes

c504b05c933a add second factor to TrustManagerService

0aa7b9ec8408 add second factor to AdaptiveAuthService

62bbdf359687 add second factor to LockSettingsStateListener

7429cc13f971 add second factor to LockSettingsService

6e2d499a37a2 add second factor to DevicePolicyManagerService

And a diffstat of over 3,000 lines … which seems a bit much for changing an OR to an AND. Of course, the reason it’s so huge is because they didn’t change the OR, they implemented an entirely new bouncer (bouncer being the android term in the code for authorisation gateway) that did pin and fingerprint in addition to the other three bouncers doing pattern, pin and password. So not only would I have to port 3,000 lines of code, but if I want a bouncer doing fingerprint and pattern, I’d have to write it. I mean colour me lazy but that seems way too much work for such an apparently simple change.

Creating a new 2FA unlock

So is it actually easy? The rest of this post documents my quest to find out. Android code itself isn’t always easy to read: being Java it’s object oriented, but the curse of object orientation is that immediately after you’ve written the code, you realise you got the object model wrong and it needs to be refactored … then you realise the same thing after the first refactor and so on until you either go insane or give up. Even worse, when many people write the code they all end up with slightly different views of what the object model should be. The result is what you see in Android today: model inconsistency and redundancy which get in the way when you try to understand the code flow simply by reading it. One stroke of luck was that there is actually only a single method that all of the unlock types other than fingerprint go through, KeyguardSecurityContainerController.showNextSecurityScreenOrFinish(), with fingerprint unlocking going via a listener to KeyguardUpdateMonitorCallback.onBiometricAuthenticated(). And, thanks to already disabling fingerprint only unlock, I know that if I simply stop triggering the latter event, it’s enough to disable fingerprint only unlock and all remaining authentication goes through the former method. So to implement the required AND function, I just have to do this and check that a fingerprint authentication is also present in showNext.. (handily signalled by KeyguardUpdateMonitor.userUnlockedWithBiometric()). That flag is set fairly late in the sequence that does the onBiometricAuthenticated() callback (so I have to cut it off after this to prevent fingerprint only unlock). As part of the Android redundancy, there’s already a check for fingerprint unlock as its own segment of a big if/else statement in the showNext.. code; it’s probably a vestige from a different fingerprint unlock mechanism but I disabled it when the user enables 2FA just in case.
There’s also an insanely complex set of listeners for updating the messages on the lockscreen to guide the user through unlocking, which I decided not to change (if you enable 2FA, you need to know how to use it). Finally, I diverted the code that would call the onBiometricAuthenticated() and instead routed it to onBiometricDetected() which triggers the LockScreen bouncer to pop up, so now you wake your phone, touch the fingerprint to the back, when authenticated, it pops up the bouncer and you enter your pin/pattern/password … neat (and simple)!
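Stripped of the Android plumbing, the change described above comes down to the following. This is a sketch in Python rather than the actual Java, and every name in it is hypothetical: `credential_ok` stands in for a successful pin/pattern/password check reaching showNextSecurityScreenOrFinish(), and `fingerprint_ok` for what userUnlockedWithBiometric() reports.

```python
def stock_policy(credential_ok: bool, fingerprint_ok: bool) -> bool:
    """Stock Android behaviour as described in the post: either factor unlocks."""
    return credential_ok or fingerprint_ok


def two_factor_policy(credential_ok: bool, fingerprint_ok: bool) -> bool:
    """The 2FA behaviour: both factors must be present."""
    return credential_ok and fingerprint_ok


def on_fingerprint_result(fingerprint_ok: bool, two_factor_enabled: bool) -> str:
    """What a fingerprint event leads to (illustrative return values only)."""
    if not fingerprint_ok:
        return "keep-listening"
    if two_factor_enabled:
        # Diverted path: remember the match and ask for the second factor.
        return "show-bouncer"
    return "unlock"
```

The only row of the truth table that changes between the two policies is the one where exactly one factor succeeds, which is why the final diff can be so small.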

Well, not so fast. While the code above works perfectly if the lockscreen is listening for fingerprints, there are two cases where it doesn’t: if the phone is in lockdown or on first boot (because the Android way of not allowing fingerprint only authentication for those cases is not to listen for it). At this stage, my test phone is actually unusable because I can never supply the required fingerprint for 2FA unlocking. Fortunately a rooted adb can update the 2FA in the secure settings service: simply run sqlite3 on /data/system/locksettings.db and flip user_2fa from 1 to 0.

The fingerprint listener is started in KeyguardUpdateMonitor, but it has a fairly huge set of conditions in updateFingerprintListeningState() which is also overloaded by doing detection as well as authentication. In the end it’s not as difficult as it looks: shouldListenForFingerprint needs to be true and runDetect needs to be false. However, even then it doesn’t actually work (although debugging confirms it’s trying to start the fingerprint listening service); after a lot more debugging it turns out that the biometric server process, which runs fingerprint detection and authentication, also has a redundant check for whether the phone is encrypted or in lockdown and refuses to start if it is, which also now needs to return false for 2FA and bingo, it works in all circumstances.

Conclusion

The final diffstat for all of this is

5 files changed, 55 insertions(+), 3 deletions(-)

So I’d say that is way simpler than the GrapheneOS one. All that remains is to add a switch for the setting (under the fingerprint settings) in packages/apps/Settings and it’s done. If you’re brave enough to try this for yourself you can go to my github account and get both the frameworks and settings commits (if you don’t want fingerprint unlock disabled when 2FA isn’t selected, you’ll have to remove the head commit in frameworks). I suppose I should also add I’ve up-ported all of my other security stuff and am now on Android-15 (LineageOS-22.2).

January 22, 2026 05:17 PM

January 04, 2026

Great, I am unable to comment at BG.

Theoretically, I have a spare place at Meenuvia, but that platform is also in decline. The owner, Pixy, has no time even to fix the slug problem that cropped up a few months ago (how do you regress a platform that was stable for 20 years, I don't know).

Most likely, I'll give up on blogging entirely, and move to Twitter or Fediverse.

January 04, 2026 08:32 PM

Wikipedia says “An IBM PC compatible is any personal computer that is hardware- and software-compatible with the IBM Personal Computer (IBM PC) and its subsequent models”. But what does this actually mean? The obvious literal interpretation is for a device to be PC compatible, all software originally written for the IBM 5150 must run on it. Is this a reasonable definition? Is it one that any modern hardware can meet?

Before we dig into that, let’s go back to the early days of the x86 industry. IBM had launched the PC built almost entirely around off-the-shelf Intel components, and shipped full schematics in the IBM PC Technical Reference Manual. Anyone could buy the same parts from Intel and build a compatible board. They’d still need an operating system, but Microsoft was happy to sell MS-DOS to anyone who’d turn up with money. The only thing stopping people from cloning the entire board was the BIOS, the component that sat between the raw hardware and much of the software running on it. The concept of a BIOS originated in CP/M, an operating system originally written in the 70s for systems based on the Intel 8080. At that point in time there was no meaningful standardisation - systems might use the same CPU but otherwise have entirely different hardware, and any software that made assumptions about the underlying hardware wouldn’t run elsewhere. CP/M’s BIOS was effectively an abstraction layer, a set of code that could be modified to suit the specific underlying hardware without needing to modify the rest of the OS. As long as applications only called BIOS functions, they didn’t need to care about the underlying hardware and would run on all systems that had a working CP/M port.

By 1979, boards based on the 8086, Intel’s successor to the 8080, were hitting the market. The 8086 wasn’t machine code compatible with the 8080, but 8080 assembly code could be assembled to 8086 instructions to simplify porting old code. Despite this, the 8086 version of CP/M was taking some time to appear, and a company called Seattle Computer Products started producing a new OS closely modelled on CP/M and using the same BIOS abstraction layer concept. When IBM started looking for an OS for their upcoming 8088 (an 8086 with an 8-bit data bus rather than a 16-bit one) based PC, a complicated chain of events resulted in Microsoft paying a one-off fee to Seattle Computer Products, porting their OS to IBM’s hardware, and the rest is history.

But one key part of this was that despite what was now MS-DOS existing only to support IBM’s hardware, the BIOS abstraction remained, and the BIOS was owned by the hardware vendor - in this case, IBM. One key difference, though, was that while CP/M systems typically included the BIOS on boot media, IBM integrated it into ROM. This meant that MS-DOS floppies didn’t include all the code needed to run on a PC - you needed IBM’s BIOS. To begin with this wasn’t obviously a problem in the US market since, in a way that seems extremely odd from where we are now in history, it wasn’t clear that machine code was actually copyrightable. In 1982 Williams v. Artic determined that it could be even if fixed in ROM - this ended up having broader industry impact in Apple v. Franklin and it became clear that clone machines making use of the original vendor’s ROM code wasn’t going to fly. Anyone wanting to make hardware compatible with the PC was going to have to find another way.

And here’s where things diverge somewhat. Compaq famously performed clean-room reverse engineering of the IBM BIOS to produce a functionally equivalent implementation without violating copyright. Other vendors, well, were less fastidious - they came up with BIOS implementations that either implemented a subset of IBM’s functionality, or didn’t implement all the same behavioural quirks, and compatibility was restricted. In this era several vendors shipped customised versions of MS-DOS that supported different hardware (which you’d think wouldn’t be necessary given that’s what the BIOS was for, but still), and the set of PC software that would run on their hardware varied wildly. This was the era where vendors even shipped systems based on the Intel 80186, an improved 8086 that was both faster than the 8086 at the same clock speed and was also available at higher clock speeds. Clone vendors saw an opportunity to ship hardware that outperformed the PC, and some of them went for it.

You’d think that IBM would have immediately jumped on this as well, but no - the 80186 integrated many components that were separate chips on 8086 (and 8088) based platforms, but crucially didn’t maintain compatibility. As long as everything went via the BIOS this shouldn’t have mattered, but there were many cases where going via the BIOS introduced performance overhead or simply didn’t offer the functionality that people wanted, and since this was the era of single-user operating systems with no memory protection, there was nothing stopping developers from just hitting the hardware directly to get what they wanted. Changing the underlying hardware would break them.

And that’s what happened. IBM was the biggest player, so people targeted IBM’s platform. When BIOS interfaces weren’t sufficient they hit the hardware directly - and even if they weren’t doing that, they’d end up depending on behavioural quirks of IBM’s BIOS implementation. The market for DOS-compatible but not PC-compatible mostly vanished, although there were notable exceptions - in Japan the PC-98 platform achieved significant success, largely as a result of the Japanese market being pretty distinct from the rest of the world at that point in time, but also because it actually handled Japanese at a point where the PC platform was basically restricted to ASCII or minor variants thereof.

So, things remained fairly stable for some time. Underlying hardware changed - the 80286 introduced the ability to access more than a megabyte of address space and would promptly have broken a bunch of things except IBM came up with an utterly terrifying hack that bit me back in 2009, and which ended up sufficiently codified into Intel design that it was one mechanism for breaking the original XBox security. The first 286 PC even introduced a new keyboard controller that supported better keyboards but which remained backwards compatible with the original PC to avoid breaking software. Even when IBM launched the PS/2, the first significant rearchitecture of the PC platform with a brand new expansion bus and associated patents to prevent people cloning it without paying off IBM, they made sure that all the hardware was backwards compatible.
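The breakage being avoided here is address wraparound, and the hack is commonly known as the A20 gate. In real mode a pointer is a 16-bit segment and a 16-bit offset, combined as segment × 16 + offset, which can reach slightly past 1MB. The 8086 and 8088 have only 20 address lines, so the top bit falls off and the access lands near address 0; the 80286 has 24, so it does not. The sketch below shows the arithmetic in Python. The `address_lines` parameter is a simplification for illustration: real hardware gates only address line 20 rather than truncating the bus width.

```python
def physical_address(segment: int, offset: int, address_lines: int) -> int:
    """Real-mode segment:offset to a physical address, truncated to the bus width."""
    linear = ((segment & 0xFFFF) << 4) + (offset & 0xFFFF)
    return linear & ((1 << address_lines) - 1)


# 8086/8088: 20 address lines, so FFFF:0010 wraps around to address 0.
on_8088 = physical_address(0xFFFF, 0x0010, address_lines=20)

# 80286: 24 address lines, so the same pointer reaches just past 1MB.
on_286 = physical_address(0xFFFF, 0x0010, address_lines=24)
```

Software that relied on the first result, deliberately or by accident, sees different memory when it gets the second.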

For decades, PC compatibility meant not only supporting the officially supported interfaces, it meant supporting the underlying hardware. This is what made it possible to ship install media that was expected to work on any PC, even if you’d need some additional media for hardware-specific drivers. It’s something that still distinguishes the PC market from the ARM desktop market. But it’s not as true as it used to be, and it’s interesting to think about whether it ever was as true as people thought.

Let’s take an extreme case. If I buy a modern laptop, can I run 1981-era DOS on it? The answer is clearly no. First, modern systems largely don’t implement the legacy BIOS. The entire abstraction layer that DOS relies on isn’t there, having been replaced with UEFI. When UEFI first appeared it generally shipped with a Compatibility Support Module, a layer that would translate BIOS interrupts into UEFI calls, allowing vendors to ship hardware with more modern firmware and drivers without having to duplicate them to support older operating systems. Is this system PC compatible? By the strictest of definitions, no.

Ok. But the hardware is broadly the same, right? There’s projects like CSMWrap that allow a CSM to be implemented on top of stock UEFI, so everything that hits BIOS should work just fine. And well yes, assuming they implement the BIOS interfaces fully, anything using the BIOS interfaces will be happy. But what about stuff that doesn’t? Old software is going to expect that my Sound Blaster is going to be on a limited set of IRQs and is going to assume that it’s going to be able to install its own interrupt handler and ACK those on the interrupt controller itself and that’s really not going to work when you have a PCI card that’s been mapped onto some APIC vector, and also if your keyboard is attached via USB or SPI then reading it via the CSM will work (because it’s calling into UEFI to get the actual data) but trying to read the keyboard controller directly won’t, so you’re still actually relying on the firmware to do the right thing but it’s not, because the average person who wants to run DOS on a modern computer owns three fursuits and some knee length socks and while you are important and vital and I love you all you’re not enough to actually convince a transglobal megacorp to flip the bit in the chipset that makes all this old stuff work.

But imagine you are, or imagine you’re the sort of person who (like me) thinks writing their own firmware for their weird Chinese Thinkpad knockoff motherboard is a good and sensible use of their time - can you make this work fully? Haha no of course not. Yes, you can probably make sure that the PCI Sound Blaster that’s plugged into a Thunderbolt dock has interrupt routing to something that is absolutely no longer an 8259 but is pretending to be so you can just handle IRQ 5 yourself, and you can probably still even write some SMM code that will make your keyboard work, but what about the corner cases? What if you’re trying to run something built with IBM Pascal 1.0? There’s a risk that it’ll assume that trying to access an address just over 1MB will give it the data stored just above 0, and now it’ll break. It’d work fine on an actual PC, and it won’t work here, so are we PC compatible?

That’s a very interesting abstract question and I’m going to entirely ignore it. Let’s talk about PC graphics. The original PC shipped with two different optional graphics cards - the Monochrome Display Adapter and the Color Graphics Adapter. If you wanted to run games you were doing it on CGA, because MDA had no mechanism to address individual pixels so you could only render full characters. So, even on the original PC, there was software that would run on some hardware but not on other hardware.

Things got worse from there. CGA was, to put it mildly, shit. Even IBM knew this - in 1984 they launched the PCjr, intended to make the PC platform more attractive to home users. As well as maybe the worst keyboard ever to be associated with the IBM brand, IBM added some new video modes that allowed displaying more than 4 colours on screen at once, and software that depended on that wouldn’t display correctly on an original PC. Of course, because the PCjr was a complete commercial failure, it wouldn’t display correctly on any future PCs either. This is going to become a theme.

There’s never been a properly specified PC graphics platform. BIOS support for advanced graphics modes ended up specified by VESA rather than IBM, and even then getting good performance involved hitting hardware directly. It wasn’t until Microsoft specced DirectX that anything was broadly usable even if you limited yourself to Microsoft platforms, and this was an OS-level API rather than a hardware one. If you stick to BIOS interfaces then CGA-era code will work fine on graphics hardware produced up until the 20-teens, but if you were trying to hit CGA hardware registers directly then you’re going to have a bad time. This isn’t even a new thing - even if we restrict ourselves to the authentic IBM PC range (and ignore the PCjr), by the time we get to the Enhanced Graphics Adapter we’re not entirely CGA compatible. Is an IBM PC/AT with EGA PC compatible? You’d likely say “yes”, but there’s software written for the original PC that won’t work there.

And, well, let’s go even more basic. The original PC had a well defined CPU frequency and a well defined CPU that would take a well defined number of cycles to execute any given instruction. People could write software that depended on that. When CPUs got faster, some software broke. This resulted in systems with a Turbo Button - a button that would drop the clock rate to something approximating the original PC so stuff would stop breaking. It’s fine, we’d later end up with Windows crashing on fast machines because hardware details will absolutely bleed through.
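To make the timing problem concrete, here is a small sketch in Python of the arithmetic behind a cycle-counted delay loop. The 4.77 MHz figure is the original PC's clock; the 8 MHz machine, the function names, and the assumption that the loop costs the same number of cycles on both CPUs are illustrative simplifications (later CPUs also needed fewer cycles per instruction, which makes the drift worse).

```python
ORIGINAL_PC_HZ = 4_770_000  # original IBM PC clock (4.77 MHz)


def cycles_for_delay(seconds: float, clock_hz: int) -> int:
    """Cycles a busy loop must burn to last `seconds` at `clock_hz`."""
    return round(seconds * clock_hz)


def actual_delay(cycles: int, clock_hz: int) -> float:
    """Seconds the same fixed cycle count takes at a different clock."""
    return cycles / clock_hz


# A loop tuned to wait 100 ms on the original PC...
loop_cycles = cycles_for_delay(0.100, ORIGINAL_PC_HZ)

# ...finishes early on a hypothetical 8 MHz machine (assumed for illustration).
faster_hz = 8_000_000
delay_on_faster = actual_delay(loop_cycles, faster_hz)
print(f"{loop_cycles} cycles: {delay_on_faster * 1000:.1f} ms at 8 MHz")
```

Software that hard-codes the cycle count gets the shorter delay on the faster machine, which is exactly the kind of breakage the Turbo Button papered over.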

So, what’s a PC compatible? No modern PC will run the DOS that the original PC ran. If you try hard enough you can get it into a state where it’ll run most old software, as long as it doesn’t have assumptions about memory segmentation or your CPU or want to talk to your GPU directly. And even then it’ll potentially be unusable or crash because time is hard.

The truth is that there’s no way we can technically describe a PC Compatible now - or, honestly, ever. If you sent a modern PC back to 1981 the media would be amazed and also point out that it didn’t run Flight Simulator. “PC Compatible” is a socially defined construct, just like “Woman”. We can get hung up on the details or we can just chill.

January 04, 2026 03:11 AM

January 02, 2026

Lots of the CVE world seems to focus on “security bugs” but I’ve found that it is not all that well known exactly how the Linux kernel security process works. I gave a talk about this back in 2023 and at other conferences since then, attempting to explain how it works, but I also thought it would be good to explain this all in writing as it is required to know this when trying to understand how the Linux kernel CNA issues CVEs.

January 02, 2026 12:00 AM

December 16, 2025

We are glad to announce that video recordings of the talks are available on our YouTube channel.

You can use the complete conference playlist or look for “video” links in each contribution in the schedule.

December 16, 2025 05:35 AM

December 15, 2025

With all of the different Linux kernel stable releases happening (at least 1 stable branch and multiple longterm branches are active at any one point in time), keeping track of what commits are already applied to what branch, and what branch specific fixes should be applied to, can quickly get to be a very complex task if you attempt to do this manually. So I’ve created some tools to help make my life easier when doing the stable kernel maintenance work, which ended up making the work of tracking CVEs much simpler to manage in an automated way.

December 15, 2025 12:00 AM

December 14, 2025

I presented an overview of the Ultraviolet Linux (UV) project at Linux Plumbers Conference (LPC) 2025.

UV is a proposed architecture and reference implementation for generalized code integrity in Linux. The goal of the presentation was to seek early feedback from the community and to invite collaboration — it’s at an early stage of development currently.

A copy of the slides may be found here (pdf).

December 14, 2025 03:38 AM

December 09, 2025

Despite having a stable release model and cadence since December 2003, Linux kernel version numbers seem to baffle and confuse those that run across them, causing numerous groups to mistakenly make versioning statements that are flat out false. So let’s go into how this all works in detail.

December 09, 2025 12:00 AM

December 08, 2025

It’s been almost 2 full years since Linux became a CNA (CVE Numbering Authority) which meant that we (i.e. the kernel.org community) are now responsible for issuing all CVEs for the Linux kernel. During this time, we’ve become one of the largest creators of CVEs by quantity, going from nothing to number 3 in 2024 to number 1 in 2025. Naturally, this has caused some questions about both how we are doing all of this work, and how people can keep track of it.

December 08, 2025 12:00 AM

December 04, 2025

InnovatiON 2022

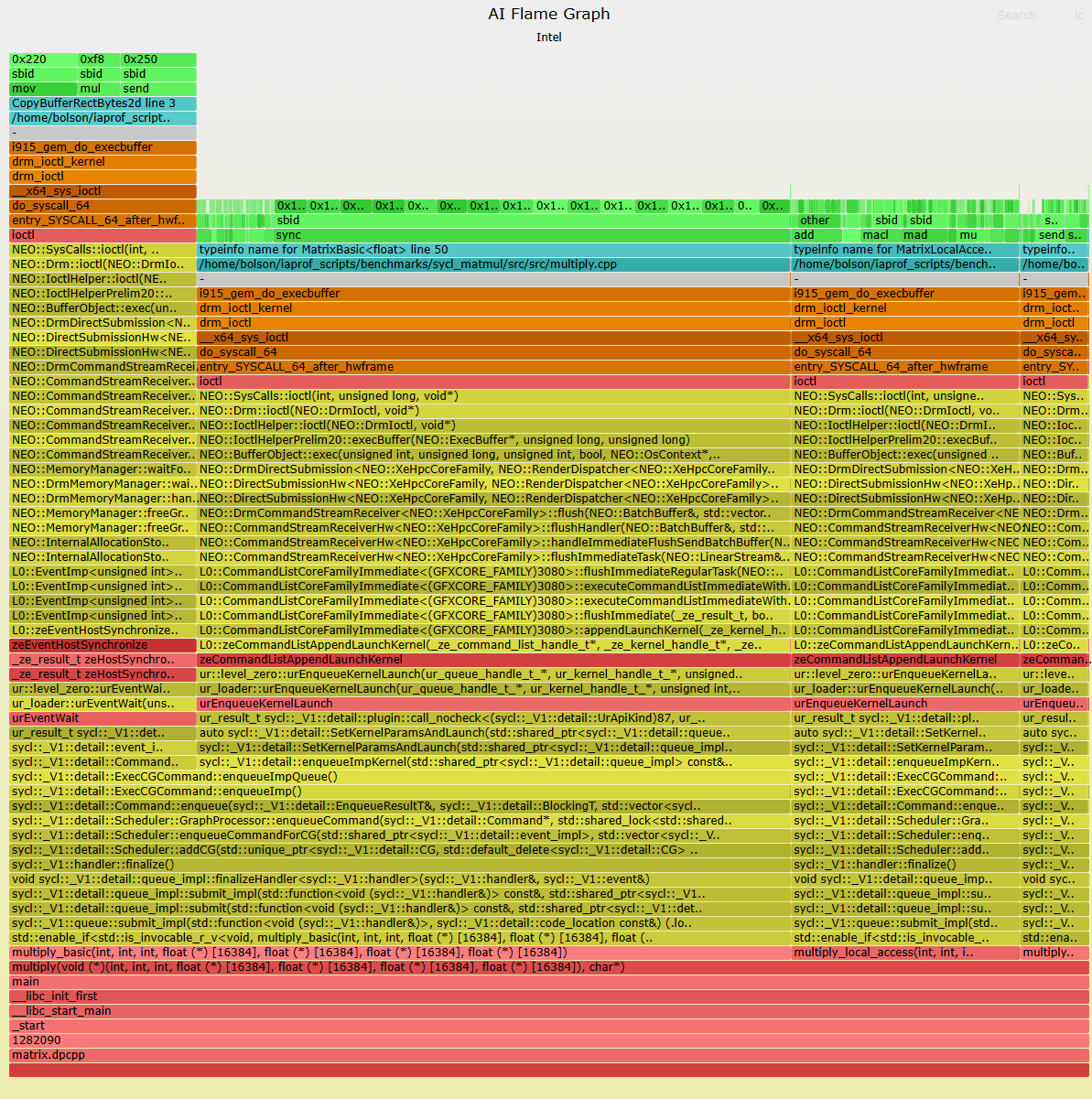

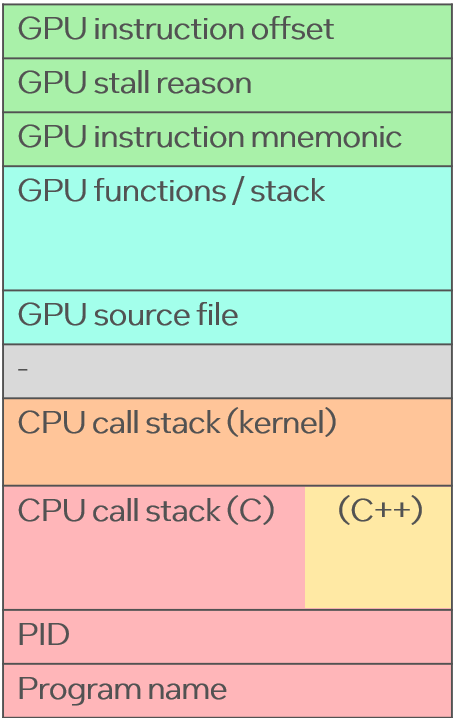

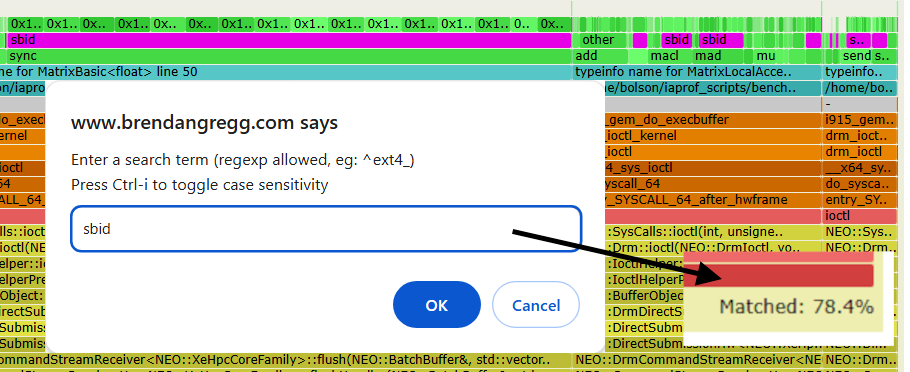

AI Flame Graphs

GPU Flame Scope

Harshad Sane

SREcon APAC

Cloud strategy

Last day

I've resigned from Intel and accepted a new opportunity. If you are an Intel employee, you might have seen my fairly long email that summarized what I did in my 3.5 years. Much of this is public:

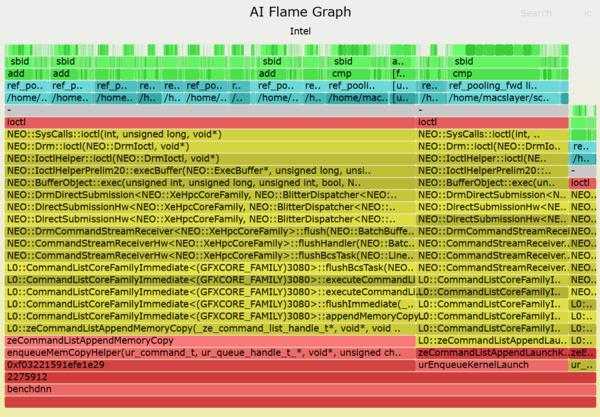

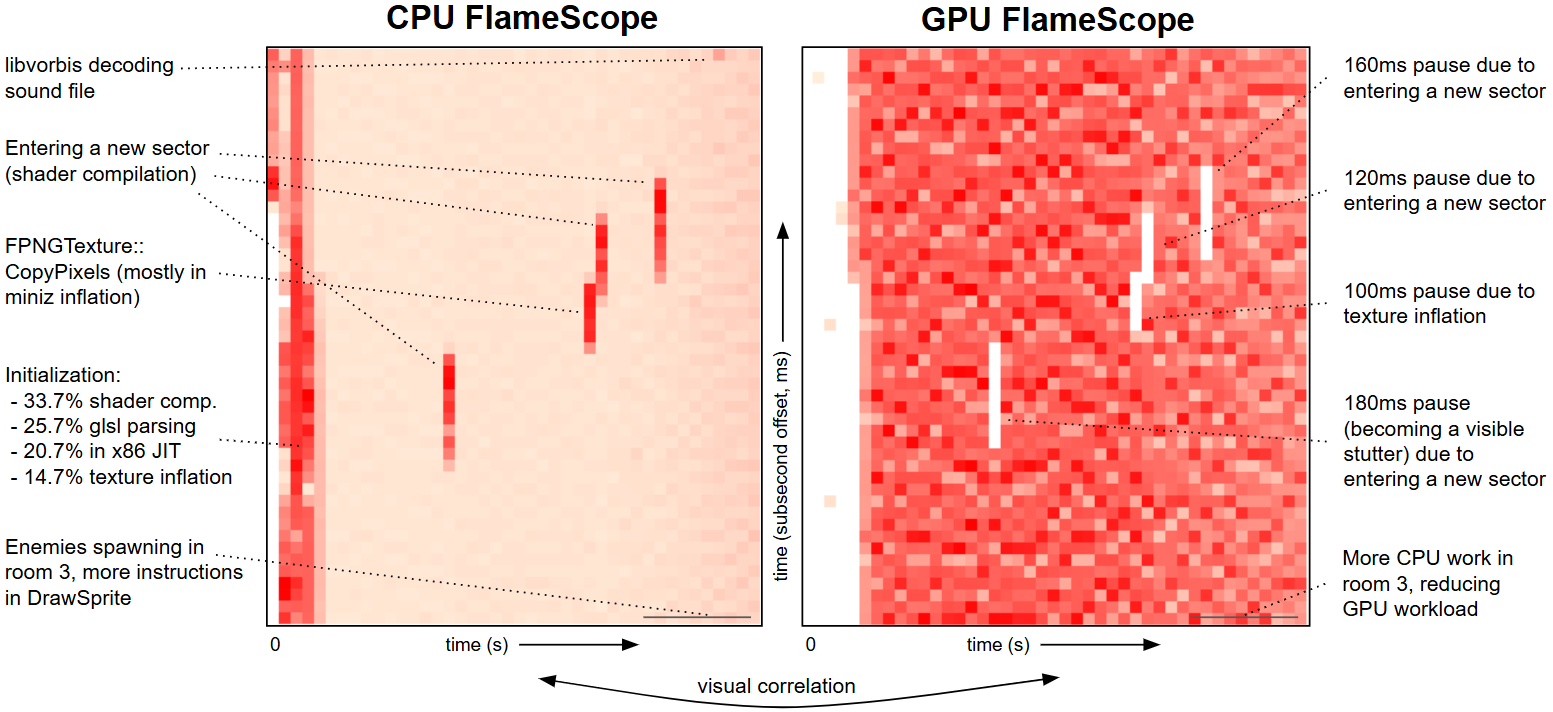

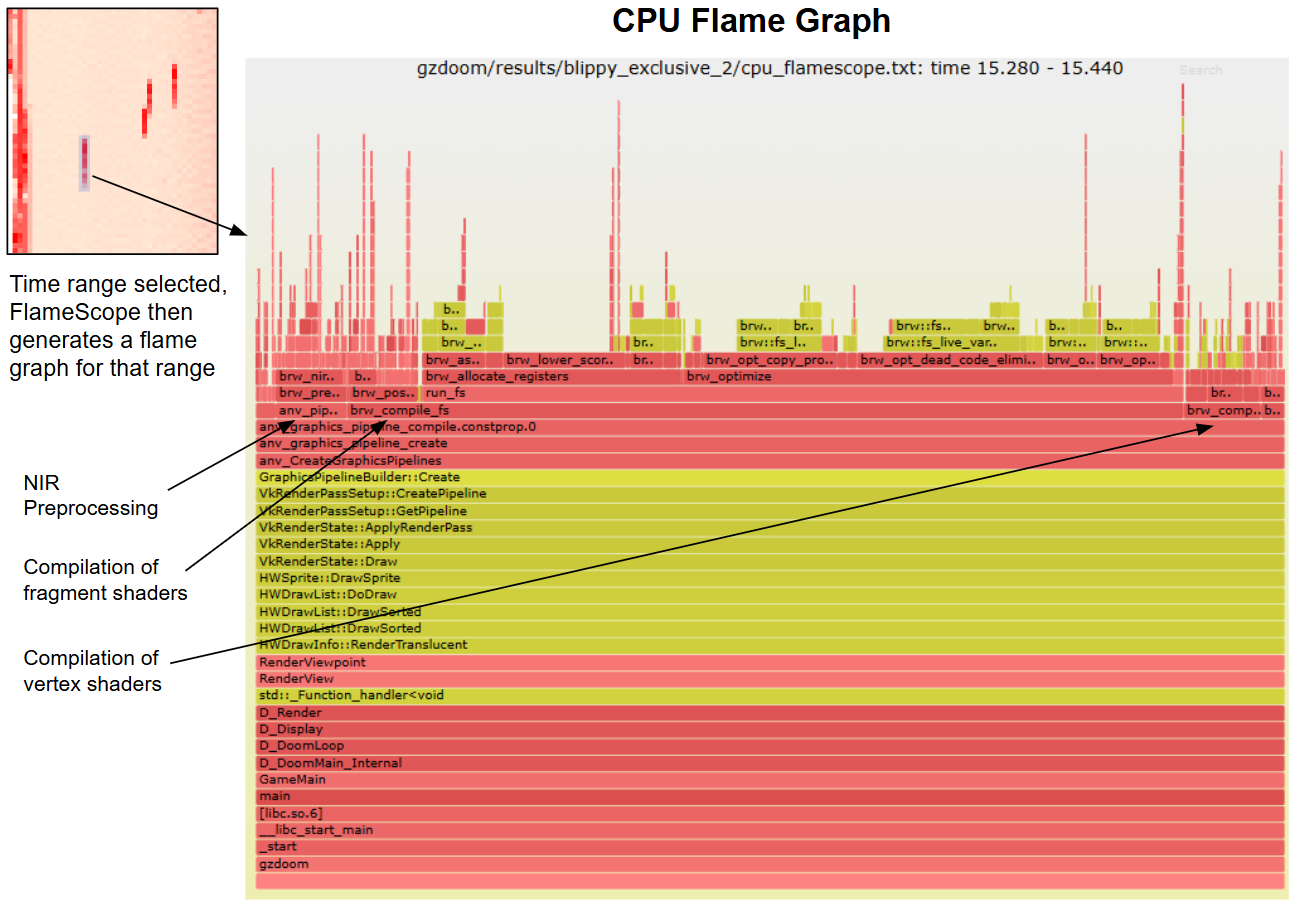

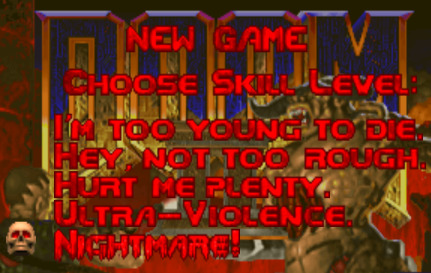

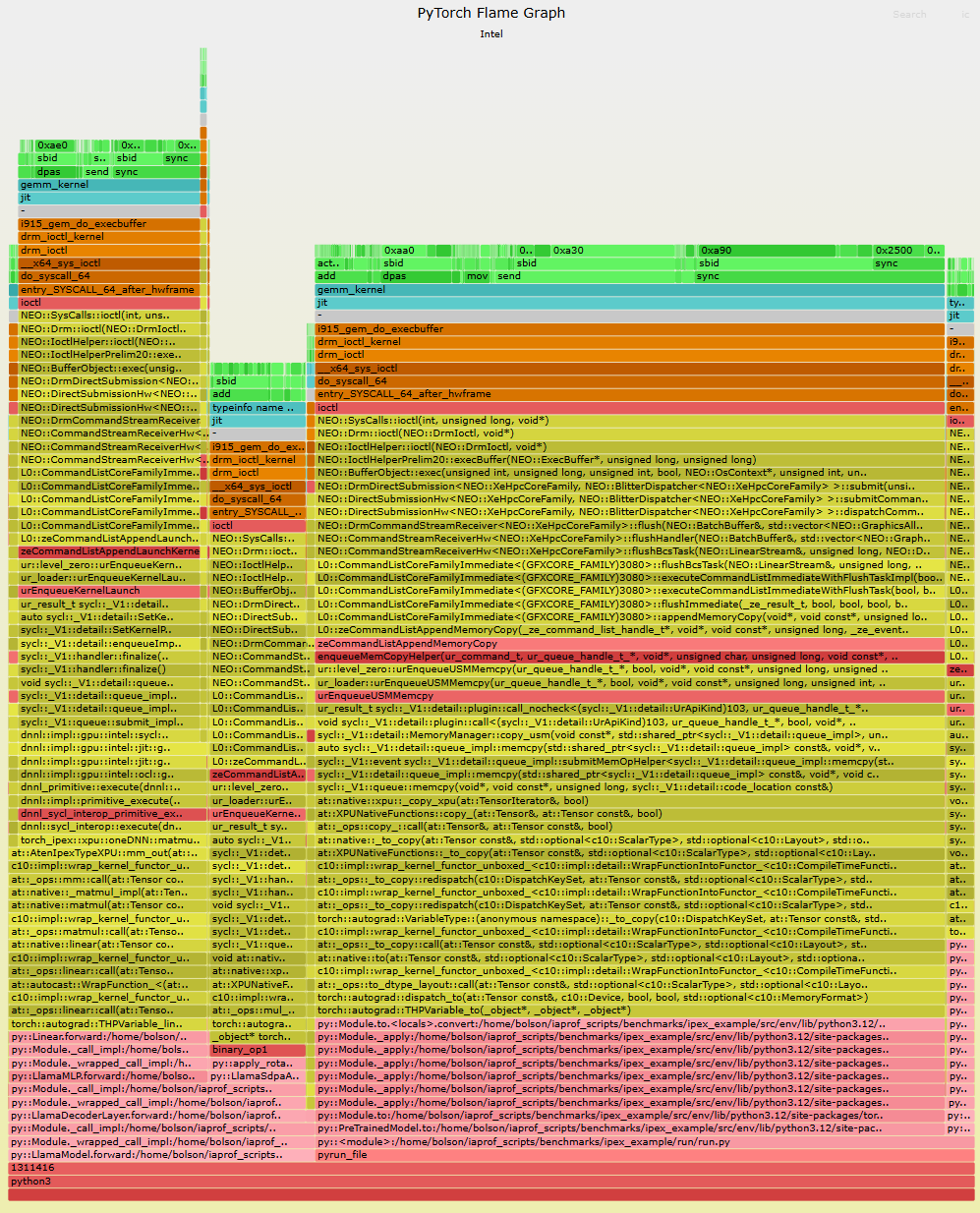

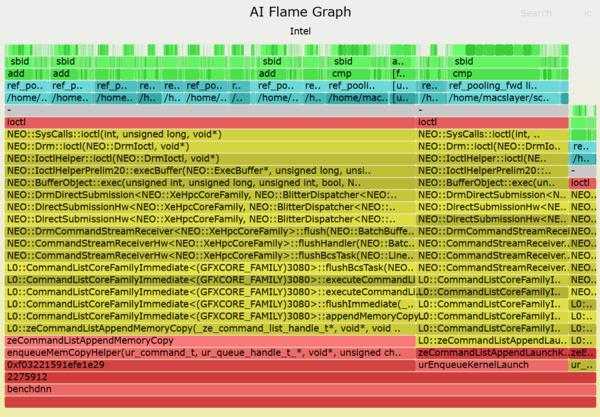

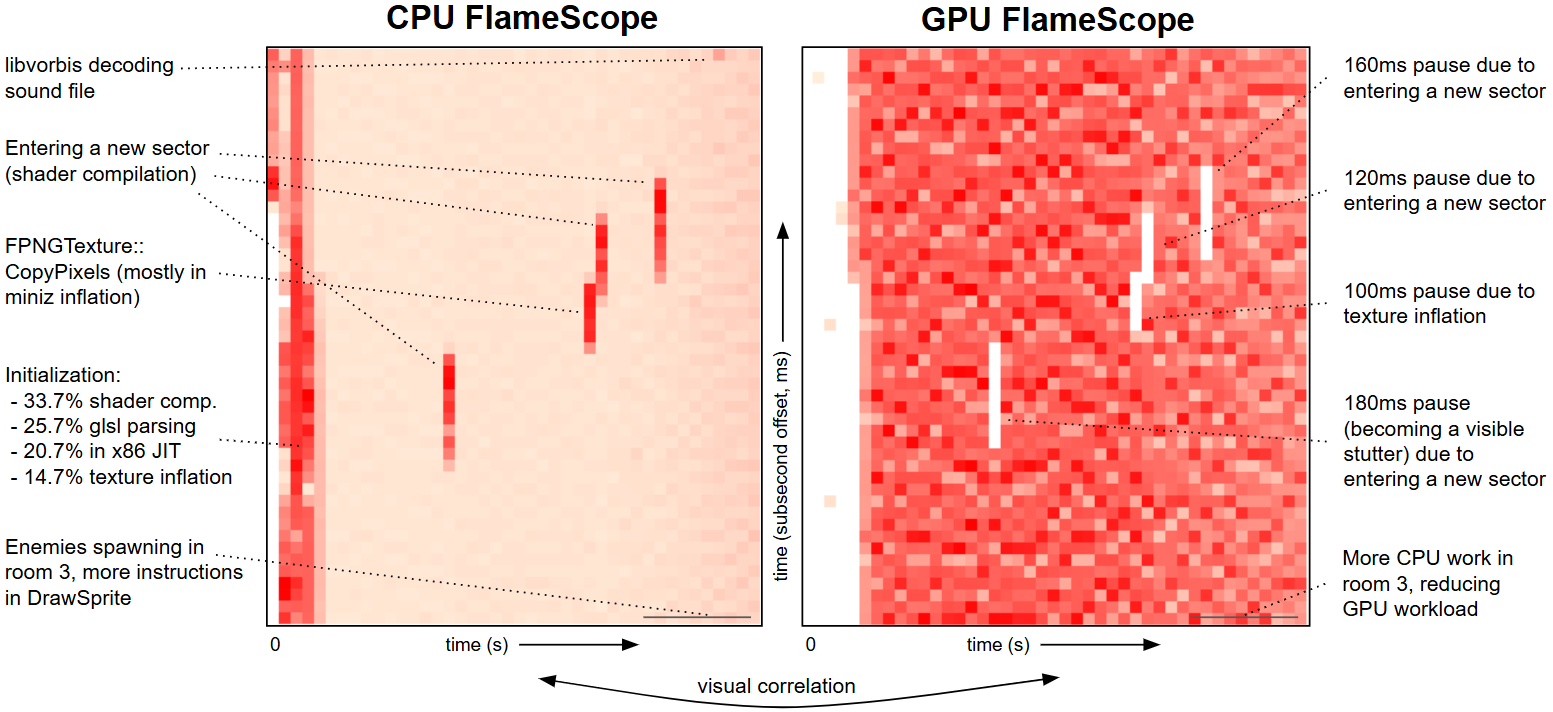

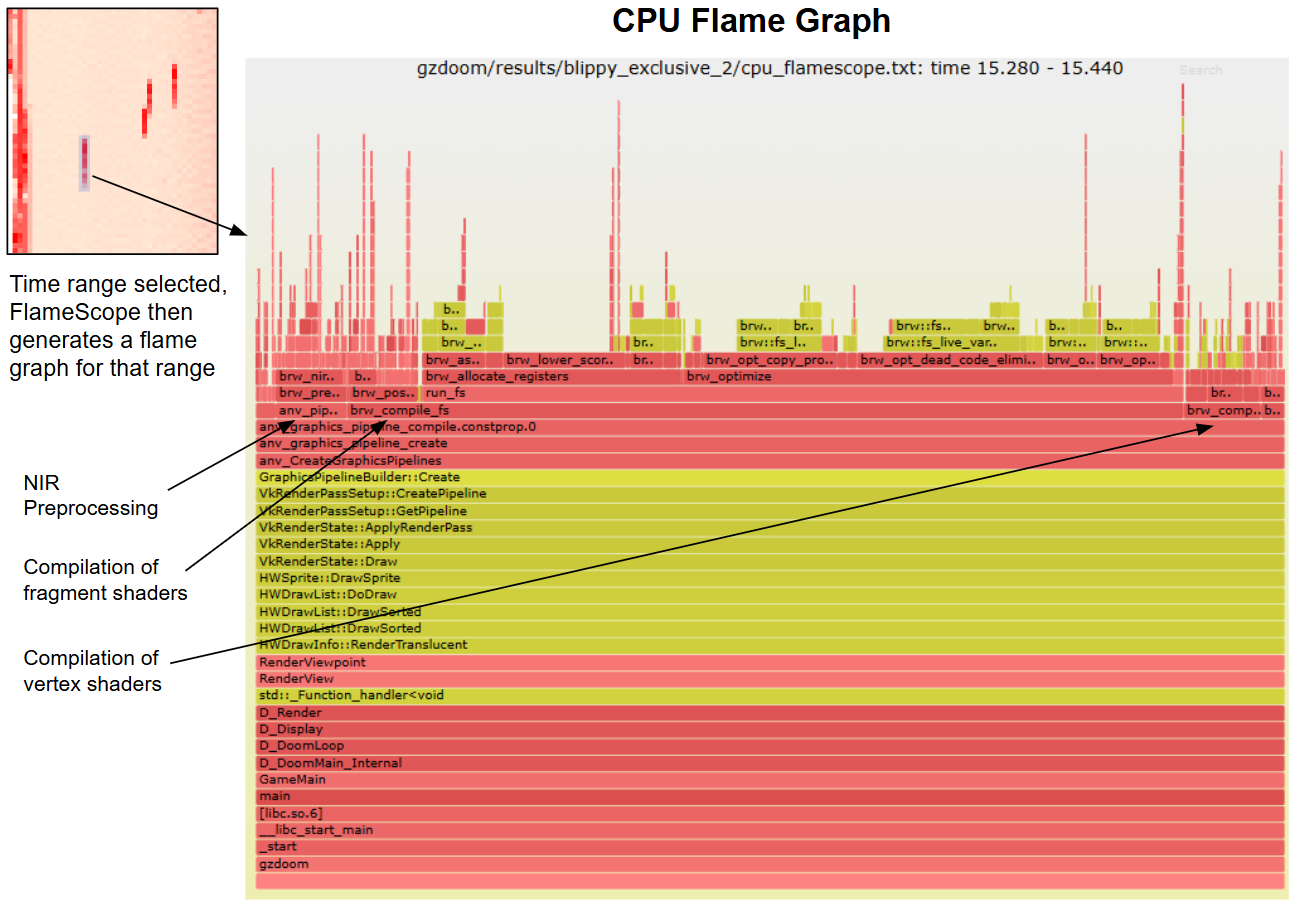

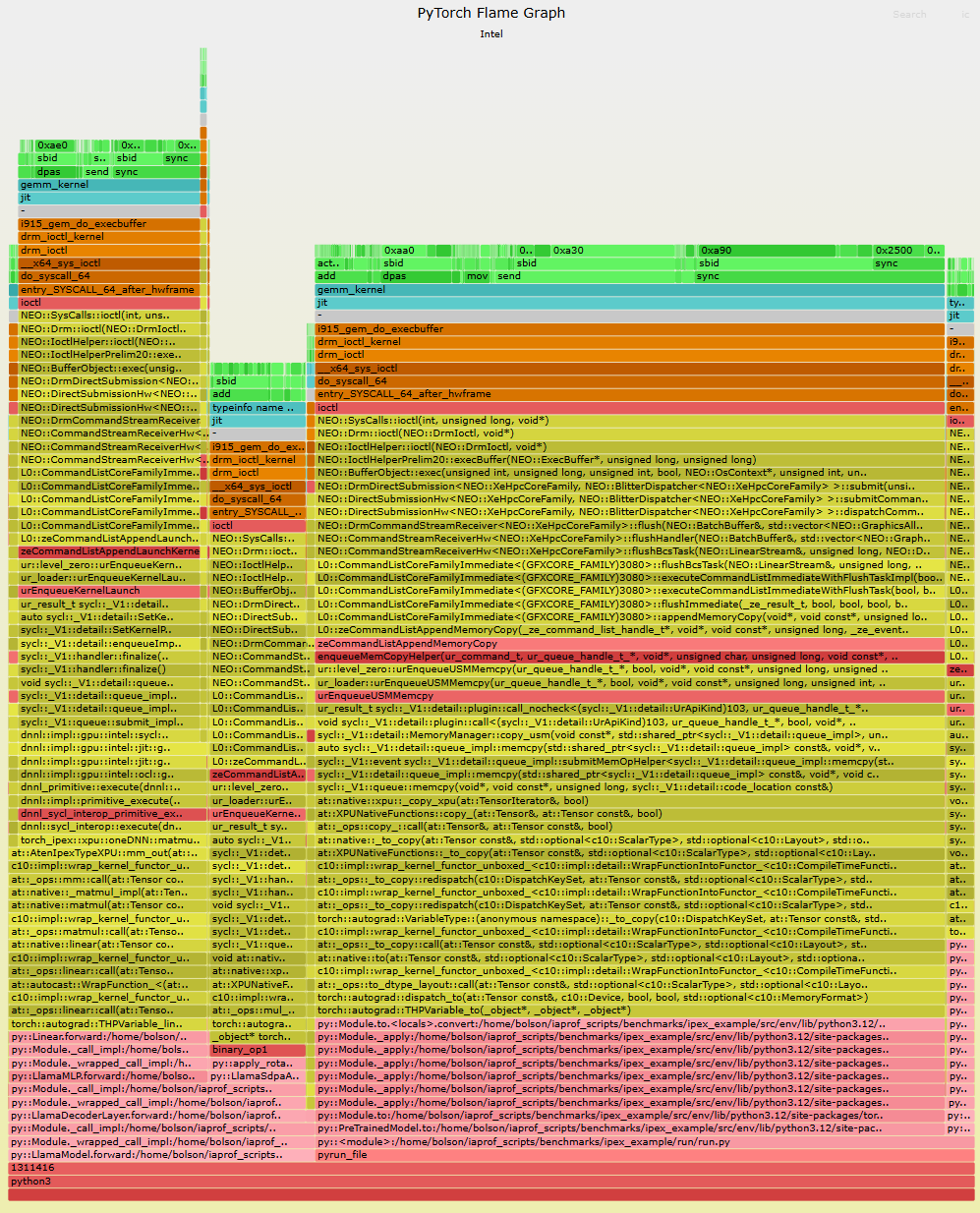

It's still early days for AI flame graphs. Right now when I browse CPU performance case studies on the Internet, I'll often see a CPU flame graph as part of the analysis. We're a long way from that kind of adoption for GPUs (and it doesn't help that our open source version is Intel only), but I think as GPU code becomes more complex, with more layers, the need for AI flame graphs will keep increasing.

I also supported cloud computing, participating in 110 customer meetings, and created a company-wide strategy to win back the cloud with 33 specific recommendations, in collaboration with others across 6 organizations. It is some of my best work and features a visual map of interactions between all 19 relevant teams, described by Intel long-timers as the first time they have ever seen such a cross-company map. (This strategy, summarized in a slide deck, is internal only.)

I always wish I did more, in any job, but I'm glad to have contributed this much especially given the context: I overlapped with Intel's toughest 3 years in history, and I had a hiring freeze for my first 15 months.

My fond memories from Intel include:

- meeting Linus at an Intel event who said "everyone is using fleme graphs these days" (Finnish accent),

- meeting Pat Gelsinger who knew about my work and introduced me to everyone at an exec all hands,

- surfing lessons at an Intel Australia and HP offsite (mp4),

- and meeting Harshad Sane (Intel cloud support engineer) who helped me when I was at Netflix and now has joined Netflix himself -- we've swapped ends of the meeting table.

I also enjoyed meeting Intel's hardware fellows and senior fellows who were happy to help me understand processor internals. (Unrelated to Intel, but if you're a Who fan like me, I recently met some other people as well!)

My next few years at Intel would have focused on execution of those 33 recommendations, which Intel can continue to do in my absence. Most of my recommendations aren't easy, however, and require accepting change, ELT/CEO approval, and multiple quarters of investment. I won't be there to push them, but other employees can (my CloudTeams strategy is in the inboxes of various ELT members, and in a shared folder with all my presentations, code, and weekly status reports). This work will hopefully live on and keep making Intel stronger. Good luck.

December 04, 2025 01:00 PM

November 27, 2025

There are now multiple AI performance engineering agents that use or are trained on my work. Some are helper agents that interpret flame graphs or eBPF metrics, sometimes privately called AI Brendan; others have trained on my work to create a virtual Brendan that claims it can tune everything just like the real thing. These virtual Brendans sound like my brain has been uploaded to the cloud by someone who is now selling it (yikes!). I've been told it's even "easy" to do this thanks to all my publications available to train on: >90 talks, >250 blog posts, >600 open source tools, and >3000 book pages. Are people allowed to sell you, virtually? And am I the first individual engineer to be AI'd? (There is a 30-year-old precedent for this, which I'll get to later.)

This is an emerging subject, with lots of different people, objectives, and money involved. Note that this is a personal post about my opinions, not an official post by my employer, so I won't be discussing internal details about any particular project. I'm also not here to recommend you buy any in particular.

Summary

- There are two types:

- AI agents. I've sometimes heard one of these called an AI Brendan because it does Brendan-like things: systems performance recommendations and interpretation of flame graphs and eBPF metrics. There are already several of these and this idea in general should be useful.

- Virtual Brendan can refer to something not just built on my work, but trained on my publications to create a virtual me. These would only automate about 15% of what I do as a performance engineer, and will go out of date if I'm not training it to follow industry changes.

- Pricing is hard, in-house is easier. With a typical pricing model of $20 per instance per month, customers may just use such an agent on one instance and then copy-and-paste any tuning changes to their entire fleet. There's no practical way to keep tuning changes secret, either. These projects are easier as internal in-house tools.

- Some claim a lot but do little. There's no Brendan Gregg benchmark or empirical measurement of my capability, so a company could claim to be selling a virtual Brendan that is nothing more than a dashboard with a few eBPF-based line charts and a flame graph. On some occasions when I've given suggestions to projects, my ideas have been considered too hard or a low priority. Which leads me to believe that some aren't trying to make a good product -- they're in it to make a quick buck.

- There's already been one expensive product failure, but I'm not rushing to conclude that the idea is bad and the industry will give up. Other projects already exist.

- I’m not currently involved with any of these products.

- We need AI to help save the planet from AI. Performance engineering gets harder every year as systems become more complex. With the rising cost of AI datacenters, we need better performance engineering more than ever. We need AI agents that claim a lot and do a lot. I wish the best of luck to those projects that agree with this mantra.
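The pricing point in the summary comes down to simple arithmetic. A sketch in Python, where $20 per instance per month is the typical price quoted above and the fleet size of 1,000 instances is a made-up number for illustration:

```python
PRICE_PER_INSTANCE_MONTH = 20  # USD, the typical price quoted above


def monthly_revenue(licensed_instances: int,
                    price: float = PRICE_PER_INSTANCE_MONTH) -> float:
    """Vendor revenue per month for a given number of licensed instances."""
    return licensed_instances * price


fleet_size = 1_000  # hypothetical customer fleet

hoped_for = monthly_revenue(fleet_size)  # agent licensed on every instance
likely = monthly_revenue(1)              # agent on one instance, fixes copied

print(f"fleet-wide: ${hoped_for:,.0f}/month")
print(f"one instance: ${likely:,.0f}/month")
print(f"captured: {likely / hoped_for:.1%} of the hoped-for revenue")
```

Under these assumptions the vendor collects $20 a month rather than $20,000, and the gap widens as the fleet grows.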

Earlier uses of AI

Before I get into the AI/Virtual Brendans, yes, we've been using AI to help performance engineering for years. Developers have been using coding agents that can help write performant code. And as a performance engineer, I'm already using ChatGPT to save time on research tasks, like finding URLs for release notes and recent developments for a given technology. I once used ChatGPT to find an old patch sent to lkml, just based on a broad description, which would otherwise take hours of trial-and-error searches. I keep finding more ways that ChatGPT/AI is useful to me in my work.

AI Agents (AI Brendans)

A common approach is to take a CPU flame graph and have AI do pattern matching to find performance issues. Some of these agents will apply fixes as well. It's like a modern take on the practice of "recent performance issue checklists," just letting AI do the pattern matching instead of the field engineer.
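As a rough sketch of the checklist idea, without any AI involved, the Python below scans folded stack samples (the `frame;frame;frame count` text format used as flame graph input) for frames that match a checklist of known issues. The checklist entries, the 5% threshold, and the sample stacks are all invented for illustration; a real agent would use a much richer knowledge base and something smarter than substring matching.

```python
from collections import Counter

# Hypothetical checklist: substring to look for in a frame -> advice.
CHECKLIST = {
    "_raw_spin_lock": "possible lock contention",
    "page_fault": "heavy page faulting; check memory growth",
}


def parse_folded(text: str) -> list[tuple[list[str], int]]:
    """Parse folded stacks: 'frame1;frame2;... count' per line."""
    stacks = []
    for line in text.strip().splitlines():
        stack, _, count = line.rpartition(" ")
        stacks.append((stack.split(";"), int(count)))
    return stacks


def match_checklist(stacks, threshold=0.05):
    """Return {pattern: share of samples} for patterns at or above threshold."""
    total = sum(count for _, count in stacks)
    hits = Counter()
    for frames, count in stacks:
        for pattern in CHECKLIST:
            # Count a stack once per pattern, however many frames match.
            if any(pattern in frame for frame in frames):
                hits[pattern] += count
    return {p: n / total for p, n in hits.items() if n / total >= threshold}


# Made-up sample profile for illustration.
sample = """
app;handle_request;parse 60
app;handle_request;cache_get;_raw_spin_lock 30
app;malloc;page_fault 10
"""

for pattern, share in match_checklist(parse_folded(sample)).items():
    print(f"{share:.0%} of samples in {pattern}: {CHECKLIST[pattern]}")
```

The interesting part of a real agent is everything this sketch leaves out: knowing which patterns matter for a given workload, and what to do about them.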

I've recently worked on a Fast by Friday methodology: where we engineer systems so that performance can be root-cause analyzed in 5 days or less. Having an AI agent look over flame graphs, metrics, and other data sources to match previously seen issues will save time and help make Fast by Friday possible. For some companies with few or no performance engineers, I'd expect matching previously seen issues should find roughly 10-50% performance gains.

I've heard some flame graph agents privately referred to as an "AI Brendan" (or similar variation on my name) and I guess I should be glad that I'm getting some kind of credit for my work. Calling a systems performance agent "Brendan" makes more sense than other random names like Siri or Alexa, so long as end users understand it means a Brendan-like agent and not a full virtual Brendan. I've also suspected this day would come ever since I began my performance career (more on this later).

Challenges:

- Hard to quantify and sell. What the product will actually do is unknown: maybe it'll improve performance by 10%, 30%, or 0%. Consider how different this is from other products where you need a thing, it does the thing, you pay for it, the end. Here you need a thing, it might do the thing but no one can promise it, but please pay us money and find out. It's a challenge. Free trials can help, but you're still asking for engineering time to test something without a clear return. This challenge is also present for building in-house tools: it's likewise hard to quantify the ROI.

- The analysis pricing model is hard. If this is supposed to be a commercial product (and not just an in-house tool) customers may only pay for one server/instance a month and use that to analyze and solve issues that they then fix on the entire fleet. In a way, you're competing with an established pricing model in this space: performance consultants (I used to be one) where you pay a single expert to show up, do analysis, suggest fixes, and leave. Sometimes that takes a day, sometimes a week, sometimes longer. But the fixes can then be used on the entire fleet forever, no subscription.